Autonomous Monthly Content Refresh: Setting Up Your GEO-Native CMS

Setting up a GEO-native CMS with autonomous monthly content refresh requires three core elements: headless architecture for API-driven content delivery, agentic automation capabilities that detect and regenerate outdated information, and intelligent edge caching with stale-while-revalidate for zero-downtime updates. This system architecture enables brands to maintain visibility across ChatGPT, Perplexity, and Google AI Overviews while eliminating manual content maintenance bottlenecks.

Key Implementation Requirements

• Headless CMS foundation: Separate backend from frontend using API-first, framework-agnostic MACH architecture for multi-channel delivery

• Automated refresh triggers: Configure content regeneration when source documents change, eliminating stale windows from batch jobs

• Durable cache layer: Implement intermediate caching across edge nodes with opt-in durable directive

• Structured data endpoints: Publish JSON-LD schema and llms.txt file to guide AI crawler ingestion

• Real-time synchronization: Deploy versioned content layer with safe preview and staging capabilities

• Performance monitoring: Track Share of Model, citation rates, and refresh velocity across AI platforms

AI search is reshaping how buyers discover products and make decisions. Half of consumers use AI-powered search today, and by 2028, this channel stands to influence $750 billion in revenue. The problem? Traditional content management systems were never designed for this paradigm shift.

A GEO-native CMS is a headless, agentic content system built specifically for Generative Engine Optimization. Unlike legacy platforms that simply store pages, it structures information as data entities, exposes JSON-LD endpoints, and deploys AI agents to generate, refresh, and cache content so large language models can instantly retrieve and cite it. This architecture lets brands maintain visibility in ChatGPT, Perplexity, and Google AI Overviews while slashing manual upkeep.

This guide walks you through setting up autonomous monthly content refresh so your CMS becomes an always-on engine for AI citations.

Why Your CMS Needs to Be GEO-Native in 2026

As Storyblok explains, "GEO is about making your content easy for AI-driven features to find, trust, and quote."

The commercial stakes are significant. Over 1 billion people now use AI search every week to research products, compare solutions, and make purchasing decisions. These users convert at dramatically higher rates: ChatGPT users convert at 15.9% compared to Google search's 1.76%.

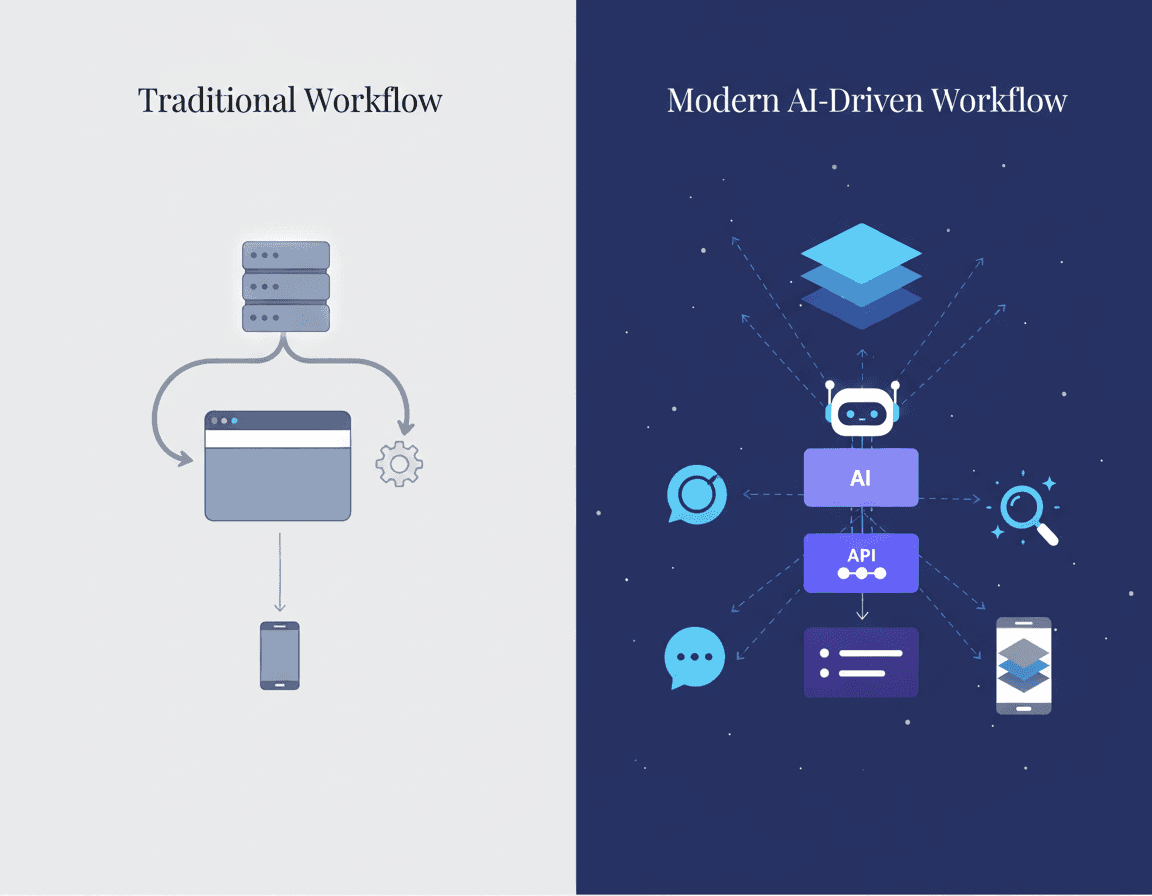

Traditional CMS platforms like Webflow, WordPress, and Contentful were built for 2000s-era SEO, requiring manual content publishing, manual content refresh cycles, and providing zero visibility into AI search results. This creates three critical failures:

• Manual publishing bottlenecks: Human effort required for every piece of content

• Stale content penalties: LLMs deprioritize outdated information

• Blind spots in AI visibility: No insight into whether brands appear in AI responses

Brands unprepared for this shift may see traffic from traditional channels decline by 20 to 50 percent. The window to establish AI search visibility is open now.

Why Does Autonomous Monthly Content Refresh Drive AI Visibility & Revenue?

Content freshness is one of the most underappreciated factors in AI search visibility. LLMs heavily prioritize recent content, and being cited pays off: benchmark data shows that brands mentioned by AI see a 38% boost in organic clicks and a 39% increase in paid ad clicks.

The recency signal is particularly strong. Research shows that content with "updated two hours ago" was cited 38% more often than month-old content. Content freshness accounts for 40% of ranking factors in AI search engines.

Original research amplifies these results: brands with original research see 3.4x higher citation rates. Because AI search traffic converts at the far higher rate cited above, each additional citation is worth more.

The data on AI crawler behavior reinforces the importance of freshness: nearly 65% of AI bot hits target content published in just the past year. Content older than six years receives only 6% of hits.

Key takeaway: Autonomous monthly refresh keeps your content in the citation pool where static competitors disappear.

What Architecture Powers a GEO-Ready CMS?

A GEO-ready CMS requires three core architectural elements: a headless content core, agentic automation capabilities, and intelligent caching for zero-downtime updates.

Headless CMSs are the modern standard for developers in 2026. The main idea is separating the backend from the frontend, so you can use any framework and deliver content anywhere through APIs.

Agentic content management systems can autonomously generate, manage, and optimize content for LLM citations. This represents a fundamental evolution beyond traditional headless architectures.

A modern approach treats content as a real-time, versioned data layer with safe ways to preview, stage, and deploy changes. Traditional CMSs often rely on plugins and batch exports that break under scale or multi-brand needs.
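The "versioned data layer with preview, stage, and deploy" idea can be illustrated with a minimal in-memory sketch. The class and method names (`VersionedEntry`, `stage`, `preview`, `deploy`, `live`) are hypothetical and not taken from any particular CMS; a real system would persist revisions and handle concurrent writes.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class VersionedEntry:
    """One content entry with an append-only revision history (illustrative only)."""
    revisions: List[str] = field(default_factory=list)
    published: Optional[int] = None  # index of the live revision, if any

    def stage(self, body: str) -> int:
        """Store a new draft revision and return its version number."""
        self.revisions.append(body)
        return len(self.revisions) - 1

    def preview(self, version: int) -> str:
        """Read any revision without changing what is live."""
        return self.revisions[version]

    def deploy(self, version: int) -> None:
        """Point the live pointer at an already staged revision."""
        if not 0 <= version < len(self.revisions):
            raise IndexError(f"unknown version {version}")
        self.published = version

    def live(self) -> Optional[str]:
        """Return the body currently served to readers and crawlers."""
        if self.published is None:
            return None
        return self.revisions[self.published]
```

Because staging never touches the live pointer, a refreshed draft can be previewed and reviewed while the previous revision keeps being served, and a rollback is just another `deploy` call with an older version number.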

Durable Cache & SWR for Zero-Downtime Updates

Edge caching patterns keep refreshed pages instant while enabling content updates without user-facing slowdowns.

Durable Cache holds cacheable responses from serverless functions at an intermediate layer, so all edge nodes share the same cached content. As Netlify explains: "Durable Cache is exactly that mechanism."

The stale-while-revalidate (SWR) directive prevents user experience slowdowns during content regeneration. According to Netlify's documentation: "It's best if users don't experience slowdowns as content is being regenerated. This is what the stale-while-revalidate directive (a.k.a SWR) is for."

These caching strategies bear directly on AI visibility: fast page load times correlate with higher citation rates, making edge performance a GEO factor.

Key caching capabilities for GEO:

• Intermediate layer caching across all edge nodes

• Instant content invalidation when sources change

• SWR for seamless background regeneration

• Opt-in durable directive in cache-control headers
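As a rough sketch of what these capabilities look like in response headers, the helper below builds a CDN-facing cache header that opts in to the durable layer and enables stale-while-revalidate. The helper name `cache_headers` is hypothetical. The `Netlify-CDN-Cache-Control` header name, the placement of the `durable` directive, and the browser-facing `Cache-Control` value are assumptions based on Netlify's published caching guidance and should be checked against the current documentation before use.

```python
def cache_headers(fresh_seconds: int, stale_seconds: int, durable: bool = True) -> dict:
    """Build response headers for a page that is regenerated in the background.

    fresh_seconds: how long the CDN may serve the response without revalidating.
    stale_seconds: how long a stale copy may still be served while a fresh one
                   is fetched in the background (stale-while-revalidate).
    durable:       opt in to the shared intermediate cache layer (assumed directive).
    """
    directives = ["public"]
    if durable:
        directives.append("durable")
    directives.append(f"s-maxage={fresh_seconds}")
    directives.append(f"stale-while-revalidate={stale_seconds}")
    return {
        # Browsers always revalidate; caching is left to the CDN layer.
        "Cache-Control": "public, max-age=0, must-revalidate",
        # CDN-specific header name is an assumption, see the note above.
        "Netlify-CDN-Cache-Control": ", ".join(directives),
    }
```

For example, `cache_headers(3600, 86400)` keeps a page fresh at the CDN for an hour and allows a stale copy to be served for up to a day while regeneration runs.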

Which Headless CMS Best Supports GEO—Relixir or Legacy Platforms?

Choosing a CMS in 2026 is no longer a superficial technology decision. As Monterail notes, "For most organizations, it is a strategic choice that affects how quickly teams can move, how well content scales across channels, and how much technical and organizational friction accumulates over time."

The choice also shapes your tech stack, architecture, and long-term scalability. For GEO, the key differentiator is whether the platform offers built-in autonomous refresh and AI visibility tracking.

| Platform | GEO Automation | AI Visibility Tracking | Autonomous Refresh |
|---|---|---|---|
| Relixir | Built-in agents | Full-suite analytics | Auto-syncs with knowledge base |
| Contentful | Manual workflows | Third-party required | Manual |
| Storyblok | Bulk update tools | Third-party required | Scheduled publishing |
| Sanity | Custom development | Third-party required | Event-driven (manual setup) |

Relixir's proprietary writing model, trained on 100,000+ blogs and real citation data, produces content specifically structured for how LLMs read and cite information.

Where Relixir Outperforms Contentful & Storyblok

Legacy platforms require significant manual effort to achieve GEO results. While the fundamentals of good SEO still apply, traditional CMS workflows create bottlenecks.

Relixir identifies the exact blind spots where competitors are being cited in AI responses while you're not. This competitive intelligence capability is absent from legacy platforms.

The citation performance difference is measurable. Relixir-generated blogs get cited 3x more often in AI search than traditional blogs. Contentful and Storyblok lack native GEO optimization, requiring extensive manual implementation to approach similar results.

Contentful prioritizes reliability, scalability, and governance. Storyblok excels at visual editing. Neither platform provides autonomous content refresh that syncs with your knowledge base or tracks AI search performance across ChatGPT, Perplexity, and Google AI Overviews.

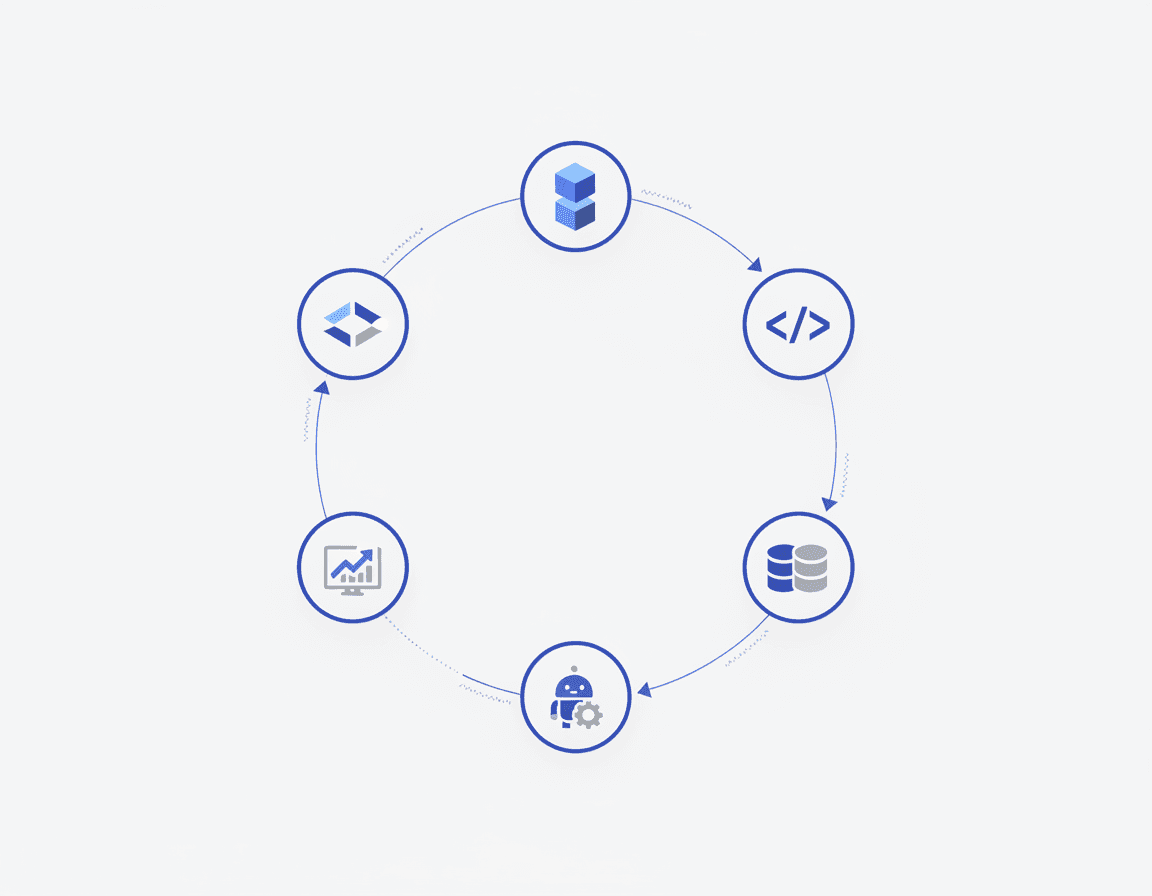

Model, Ship, Tune: Implementing Monthly Refresh in 5 Steps

Winning AI citations takes two things: structure and speed. As Storyblok describes it, that means facts, definitions, steps, and schema that AI can parse instantly, plus the ability to update those facts everywhere, fast.

Step 1: Define Your Content Model

Structure content as reusable entities rather than static pages. Create content types for facts, definitions, statistics, and FAQs that can be assembled into articles.
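One way to picture "reusable entities rather than static pages" is a small set of typed records that articles reference instead of copying. The sketch below is illustrative only: the type names (`Fact`, `FAQ`, `Article`) and fields are assumptions, not the schema of any specific CMS.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List


@dataclass(frozen=True)
class Fact:
    """A single claim that can appear in many articles (hypothetical type)."""
    id: str
    statement: str
    source_url: str
    verified_on: date


@dataclass(frozen=True)
class FAQ:
    question: str
    answer: str


@dataclass
class Article:
    """An article assembled from shared entities instead of hand-written copy."""
    title: str
    facts: List[Fact] = field(default_factory=list)
    faqs: List[FAQ] = field(default_factory=list)

    def fact_ids(self) -> List[str]:
        return [f.id for f in self.facts]


def articles_using(fact_id: str, articles: List[Article]) -> List[str]:
    """Titles of every article that embeds the given fact."""
    return [a.title for a in articles if fact_id in a.fact_ids()]
```

The payoff of this shape is that when one fact changes, `articles_using` answers "which pages need a refresh?" with a lookup rather than a manual content audit.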

Step 2: Configure Schema Markup

Pages with valid schema markup are 2-4x more likely to appear in Google's AI Overviews and featured snippets. Implement JSON-LD for all content types.
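A minimal sketch of generating JSON-LD for an article follows, using the Schema.org `Article` type with `datePublished` and `dateModified`. The function names and the choice of fields are assumptions for illustration; a production setup would map each content type to its own Schema.org type and validate the output.

```python
import json
from datetime import datetime, timezone


def article_jsonld(headline: str, url: str, author_name: str,
                   published: datetime, modified: datetime) -> str:
    """Serialize basic Article metadata as a JSON-LD string."""
    doc = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }
    return json.dumps(doc, indent=2)


def script_tag(jsonld: str) -> str:
    """Wrap a JSON-LD string in the script element used to embed it in a page."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'
```

Updating `dateModified` as part of every automated refresh keeps the machine-readable timestamp in step with the visible content.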

Step 3: Connect Your Knowledge Base

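Link your CMS to product specs, documentation, release notes, and pricing pages so that changes in those sources can flow into published content. Platform support varies: Sanity, for example, supports scheduled publishing via API, so updates go live at precise times without locking datasets.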

Step 4: Configure Refresh Triggers

Set up automated refresh when source documents change. Your CMS should detect outdated information and queue content for regeneration.
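A change-driven trigger can be sketched as "fingerprint each source document, and queue every dependent article when the fingerprint changes". The code below is a simplified, in-memory illustration: `RefreshQueue`, `observe`, and the `dependents` mapping are hypothetical names, and a real system would persist the fingerprints and hand queued items to the regeneration agent.

```python
import hashlib
from collections import deque


def fingerprint(text: str) -> str:
    """Stable content hash used to detect changes in a source document."""
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


class RefreshQueue:
    """Queues dependent articles whenever a watched source document changes."""

    def __init__(self, dependents: dict):
        self.dependents = dependents  # source id -> list of article ids
        self.seen = {}                # source id -> last known fingerprint
        self.pending = deque()        # article ids waiting for regeneration

    def observe(self, source_id: str, text: str) -> bool:
        """Record the latest source text; return True if it changed."""
        digest = fingerprint(text)
        # The first observation only sets a baseline and never counts as a change.
        changed = self.seen.get(source_id) not in (None, digest)
        self.seen[source_id] = digest
        if changed:
            for article in self.dependents.get(source_id, []):
                if article not in self.pending:  # avoid duplicate queue entries
                    self.pending.append(article)
        return changed
```

Calling `observe` from a webhook or polling job on each source update removes the need to wait for a nightly batch to notice that something is out of date.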

Step 5: Publish and Monitor

Publish a dedicated JSON-LD endpoint so AI crawlers can read your facts directly; without one, your content risks staying invisible. Then track citation rates across AI platforms and iterate.

Publishing JSON-LD & llms.txt for Fast AI Ingestion

Structured data is how you expose facts machines will cite.

Use JSON-LD for all structured data needs as it's the modern standard recommended by Google. JSON-LD (JavaScript Object Notation for Linked Data) is a method of encoding linked data using JSON, combined with Schema.org vocabulary to create structured data that search engines and AI systems can understand.

The proposed llms.txt file is essentially a treasure map for language-model crawlers: a root-level Markdown document that curates the handful of URLs on your site you most want LLMs to read at inference time.
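The sketch below generates such a file from a curated list of links. It assumes the layout described in the llms.txt proposal as commonly presented (an H1 title, a blockquote summary, then H2 sections of Markdown links with short notes); the function name `build_llms_txt` and the example data are hypothetical, and the proposal itself may evolve.

```python
def build_llms_txt(site_name: str, summary: str, sections: dict) -> str:
    """Render a root-level llms.txt document.

    sections maps a heading to a list of (title, url, note) tuples.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    # Collapse trailing blank lines into a single final newline.
    return "\n".join(lines).rstrip() + "\n"
```

Regenerating this file as part of each refresh cycle keeps the curated URL list aligned with whatever pages were most recently updated.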

Keep a stable response_id and expose a provenance bundle at a resolvable URL that follows PROV conventions. This enables auditability and builds trust with AI systems.
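As a hedged illustration of a provenance bundle, the sketch below derives a stable `response_id` from the canonical URL and records sources using terms from the W3C PROV-O vocabulary (`prov:Entity`, `prov:wasDerivedFrom`, `prov:generatedAtTime`, `prov:wasAttributedTo`). The function name, the use of a UUIDv5 for the identifier, and the overall shape of the bundle are assumptions; the source does not prescribe a specific format.

```python
import json
import uuid


def provenance_bundle(answer_url: str, source_urls: list,
                      generated_at: str, agent: str) -> dict:
    """Build a small JSON-LD style provenance record for one published answer."""
    # UUIDv5 is deterministic: the same URL always yields the same id.
    response_id = str(uuid.uuid5(uuid.NAMESPACE_URL, answer_url))
    return {
        "@context": {"prov": "http://www.w3.org/ns/prov#"},
        "@id": answer_url,
        "@type": "prov:Entity",
        # Plain key for illustration; a strict JSON-LD setup would map it in @context.
        "response_id": response_id,
        "prov:generatedAtTime": generated_at,
        "prov:wasAttributedTo": {"@id": agent},
        "prov:wasDerivedFrom": [{"@id": u} for u in source_urls],
    }


def to_json(bundle: dict) -> str:
    """Serialize the bundle for serving at a resolvable URL."""
    return json.dumps(bundle, indent=2, sort_keys=True)
```

Because the identifier depends only on the URL, a monthly refresh changes `prov:generatedAtTime` and the source list while the `response_id` stays the same.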

Key structured data elements:

• JSON-LD schema for all content types

• llms.txt pointing to high-value URLs

• datePublished and dateModified timestamps

• Author credentials and entity relationships

How Do You Measure GEO Success in AI Search?

Tracking AI visibility requires new metrics beyond traditional SEO.

Market leaders average 31% Share of Model across all platforms. The top 3 brands capture 67% of all AI mentions in their category. These benchmarks establish clear targets.

73% of consumers trust AI recommendations, making AI visibility directly tied to purchase decisions. The urgency is clear: traditional search engine volume is predicted to drop 25% by 2026 and 50% by 2028.

16% of US searches now show AI Overviews, more than double the share in March 2025. This coverage will continue expanding.

Core GEO KPIs to track:

• Share of Model: Your visibility across AI platforms

• Citation Rate: How often your content gets cited

• Refresh Velocity: Time from source change to content update

• Competitive Citation Gap: Topics where competitors appear and you don't

• Conversion from AI Traffic: Revenue attributed to AI referrals
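The KPIs above can be computed from a sample of AI responses once you decide on working definitions. The formulas below are assumptions chosen for illustration (the article does not define them precisely): Share of Model as your brand's share of all brand mentions, Citation Rate as the fraction of sampled responses that cite you, Refresh Velocity in hours, and the Competitive Citation Gap as a set difference of topics.

```python
from datetime import datetime


def share_of_model(responses: list, brand: str) -> float:
    """Brand's share of all brand mentions across sampled AI responses.

    responses is a list of lists, each holding the brands named in one answer.
    """
    total = sum(len(r) for r in responses)
    ours = sum(r.count(brand) for r in responses)
    return ours / total if total else 0.0


def citation_rate(responses_citing_us: int, responses_sampled: int) -> float:
    """Fraction of sampled responses that cite our content."""
    return responses_citing_us / responses_sampled if responses_sampled else 0.0


def refresh_velocity_hours(source_changed: datetime, content_updated: datetime) -> float:
    """Hours between a source change and the refreshed content going live."""
    return (content_updated - source_changed).total_seconds() / 3600


def citation_gap(competitor_topics: set, our_topics: set) -> set:
    """Topics where a competitor is cited and we are not."""
    return competitor_topics - our_topics
```

Whatever definitions you settle on, keeping them fixed across months matters more than the exact formula, since the trend is what shows whether refresh is working.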

Schema markup correlates with 34% more AI Overview citations. Author credentials show a 0.71 correlation with citations. These technical factors are measurable and actionable.

What Pitfalls and Governance Gaps Should You Avoid?

GEO is not just SEO for chatbots. As one industry analysis notes, "GEO is a broader discipline: it's the method of making your brand, content, products, and facts retrievable, referenceable, and trustworthy inside LLM-based outputs."

Freshness Debt

Batch jobs and nightly exports create stale windows and race conditions. Real-time synchronization eliminates these gaps.

Provenance Gaps

Publish a concise public citation block (titles, URLs, short excerpt, author, date) that is rendered for users and indexed by crawlers. Missing provenance undermines trust signals.

Analytics-Only Trap

Many teams discover that knowing where they're losing to competitors doesn't solve the underlying problem. They still need to manually create optimized content, manually refresh existing content, and manually implement recommendations. Analytics without automation just creates more work.

Content Parity Violations

If AI sees schema data not visible on the rendered page, Google flags it as "Spammy Structured Data." Ensure your structured data reflects actual page content.
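A simple parity check can catch the most obvious violations before publishing: extract the visible text of the rendered page and confirm that key JSON-LD values actually appear in it. This is a rough sketch using only the standard library; the function names and the choice of fields to compare are assumptions, and it does not replicate how Google evaluates structured data.

```python
from html.parser import HTMLParser


class _VisibleText(HTMLParser):
    """Collects text outside <script> and <style> elements."""
    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def visible_text(html: str) -> str:
    """Return whitespace-normalized text a reader would see on the page."""
    parser = _VisibleText()
    parser.feed(html)
    parser.close()
    return " ".join(" ".join(parser.chunks).split())


def parity_violations(jsonld: dict, html: str, fields=("headline", "description")) -> list:
    """List JSON-LD fields whose values do not appear in the visible page text."""
    text = visible_text(html).lower()
    return [f for f in fields if f in jsonld and str(jsonld[f]).lower() not in text]
```

Running this as a publish-time gate means an automated refresh cannot update the schema without also updating the rendered copy.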

Over-reliance on Third-Party Sources

Brand-owned pages often make up only 5-10% of the sources AI uses for answers. Build your own content depth rather than depending on external citations.

Next Steps: Turn Your CMS into an Always-On GEO Engine

The shift to AI search is accelerating. Relixir customers consistently achieve a 3-5x increase in AI search mention rate within 2-4 weeks of deployment.

The Relixir platform combines autonomous content refresh, GEO analytics, and a headless CMS architecture designed from the ground up for AI visibility.

To start implementing autonomous monthly refresh:

• Audit your current content freshness and AI citation rates

• Map your knowledge base sources that should trigger refresh

• Implement JSON-LD schema across your content library

• Set up llms.txt to guide AI crawlers

• Configure automated refresh workflows
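The freshness audit in the first step can start as something very small: list every page whose last modification is older than your refresh window. The sketch below assumes a 30-day window as the meaning of "monthly" and a simple URL-to-date mapping; both are illustrative choices rather than requirements.

```python
from datetime import date, timedelta


def stale_pages(last_modified: dict, today: date, max_age_days: int = 30) -> list:
    """Return URLs whose last modification is older than the refresh window.

    last_modified maps a URL to the date the page was last updated.
    """
    cutoff = today - timedelta(days=max_age_days)
    return sorted(url for url, modified in last_modified.items() if modified < cutoff)
```

The resulting list is a natural input for the first round of automated refresh, and rerunning the audit each month shows whether the backlog is shrinking.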

The window to dominate AI-search-driven revenue is open right now. Companies that establish AI search visibility today will have a significant competitive advantage as the shift from traditional search to AI search accelerates.

Frequently Asked Questions

What is a GEO-native CMS?

A GEO-native CMS is a headless, agentic content system designed for Generative Engine Optimization. It structures information as data entities, uses JSON-LD endpoints, and employs AI agents to generate, refresh, and cache content for AI search engines.

Why is content freshness important for AI search visibility?

Content freshness is crucial because AI search engines prioritize recent content. Fresh content is more likely to be cited by AI, leading to higher visibility and conversion rates. Autonomous monthly refresh ensures your content remains relevant and visible in AI search results.

How does Relixir's CMS differ from traditional platforms like WordPress?

Relixir's CMS is designed for the AI search era, offering built-in GEO automation, AI visibility tracking, and autonomous content refresh. Traditional platforms like WordPress require manual content management and lack the AI-focused features necessary for optimal AI search performance.

What are the benefits of using JSON-LD in a GEO-native CMS?

JSON-LD is used to encode structured data, making it easier for AI systems to understand and cite your content. It enhances AI visibility by providing clear, machine-readable data that aligns with AI search engine requirements.

How can companies measure success in AI search with a GEO-native CMS?

Success can be measured using metrics like Share of Model, Citation Rate, Refresh Velocity, and Conversion from AI Traffic. These metrics help track AI visibility and the effectiveness of content strategies in driving AI search traffic and revenue.

Sources

https://www.netlify.com/blog/durable-cache-quest-for-fast-fresh-content/

https://www.enterprisecms.org/guides/content-synchronization-strategies-for-enterprise-cms

https://storyblok.com/mp/how-to-optimize-for-generative-engine-optimization-with-a-headless-cms

https://www.citedify.com/blog/ai-visibility-measurement-guide-2026

https://strapi.io/blog/generative-engine-optimization-geo-guide

https://relixir.ai/blog/end-to-end-answer-engine-optimization-platforms-complete-guide

https://www.knewsearch.com/blog/ai-visibility-benchmark-report

https://www.contentful.com/blog/geo-playbooks-prepare-content-generative-search/

https://www.digitalapplied.com/blog/schema-markup-ai-generation-guide-2026

https://www.growthmarshal.io/field-notes/how-to-use-endpoints-to-drive-llm-citations

https://relixir.ai/blog/best-tools-to-track-ai-search-citations