Building an Agentic GEO-Native CMS: Cursor-Style Chat for Content Teams

An agentic CMS embeds autonomous AI agents directly into content workflows, enabling natural language editing through chat interfaces while maintaining enterprise governance. These systems treat content as structured data that machines can understand, allowing teams to automate translation, summarization, and SEO directly inside publishing workflows with proper permissions and audit trails.

At a Glance

• Chat-based editing: Replace point-and-click CMS workflows with natural language commands that can update content across entire libraries instantly

• Autonomous agents: Deploy AI teammates that handle translation, summarization, and SEO with roles, permissions, and audit trails on every action

• GEO optimization: Structure content with JSON-LD and entity graphs to improve visibility in ChatGPT, Perplexity, and other AI search engines

• Continuous refresh: Automatically detect and update outdated information to maintain recency signals that LLMs prioritize for citations

• Enterprise governance: Implement brand style packs, policy validators, and approval workflows to ensure AI-generated content meets compliance standards

Buyers increasingly skip generic Google keyword searches. They ask AI assistants hyper-specific questions and expect synthesized, citation-backed answers in seconds. Traditional content management systems were never designed for this paradigm. They require manual publishing and manual refresh cycles, and they provide no visibility into whether your brand appears in AI search results.

An Agentic CMS changes that equation. It embeds autonomous AI agents directly into editorial workflows, enabling teams to read, write, and publish content through natural language chat while maintaining enterprise governance. This guide walks you through how to build or adopt a GEO-native CMS that keeps your content fresh, structured, and visible across ChatGPT, Perplexity, Google AI Overviews, and every other generative engine shaping buyer decisions today.

Why Content Teams Need an Agentic CMS in the GEO Era

An Agentic CMS embeds autonomous AI agents directly into content workflows, enabling them to read, write, and take action on content independently. Unlike bolt-on chatbots, these agents operate within defined permissions and governance rules, handling repetitive tasks like metadata fixes, SEO checks, and language localization without human handoffs.

This matters because Generative Engine Optimization (GEO) is now a distinct discipline focused on optimizing content for AI search engines. GEO targets inclusion and attribution in AI outputs, not merely organic rankings. The shift is substantial: Gartner predicts that traditional search volume will drop 25% by 2026 as AI chatbots capture market share.

"Traditional CMSs were built for scaling editorial teams, not for today's leaner teams under pressure to ship more content, faster."

Content teams face a fundamental mismatch. Legacy platforms excel at storing and displaying content for human visitors but fail at three critical capabilities:

• Automated content publishing: AI search engines process millions of queries daily. Manual ideation-to-publish workflows cannot scale to match.

• Continuous content refresh: LLMs heavily prioritize recency. Six-month-old statistics get deprioritized or ignored entirely.

• AI search visibility: Most teams have no idea whether their brand appears in AI responses or why competitors get cited instead.

Agentic CMS architecture solves these gaps by treating content as structured data that machines can understand, manipulate, and optimize rather than static text waiting to be fetched.

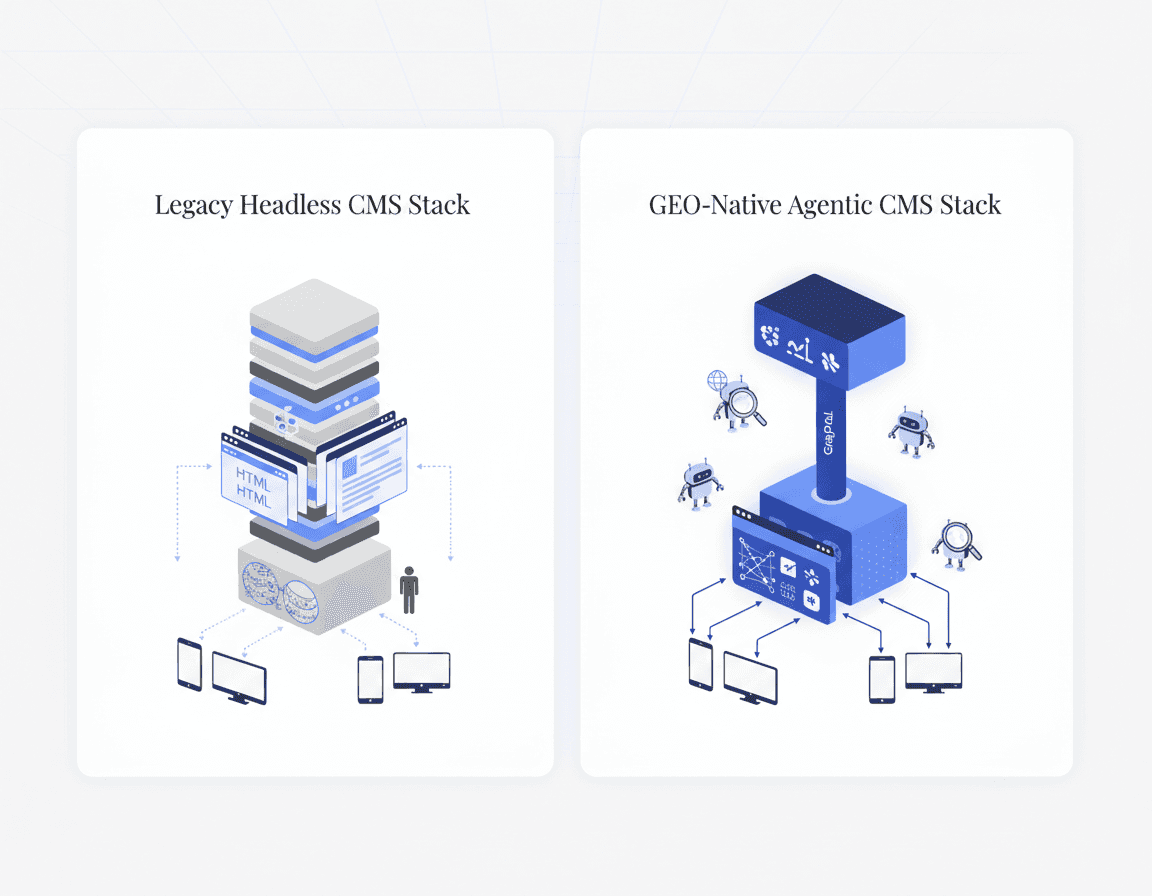

How Does GEO-Native Architecture Move from Headless to Agentic?

The transition from headless to agentic architecture requires rethinking how content is stored, queried, and acted upon. Enterprises spent the last decade decoupling frontends from backends. This solved omnichannel delivery but left content fragmented: most legacy systems still store it as HTML blobs or unstructured strings.

A GEO-native CMS changes this dynamic by treating content as structured data that machines can understand, manipulate, and optimize. Effective AI implementation requires granular content modeling where each piece of content carries explicit metadata about entities, relationships, and attributes.

Structured data serves as the backbone: machine-readable information written in a predictable format using a shared vocabulary. JSON-LD has become the most practical format because it does not interfere with visible HTML and is recommended by major search engines.
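To make granular content modeling concrete, here is a minimal Python sketch of an entry stored as typed fields with explicit entity references instead of one HTML blob. The class names (`Entity`, `ContentEntry`), the field names, and the sample data are assumptions for illustration, not the schema of any particular CMS.

```python
from dataclasses import dataclass, field


@dataclass
class Entity:
    """A named thing the content is about (product, person, organization)."""
    id: str
    type: str  # e.g. "Product" or "Organization"
    name: str


@dataclass
class ContentEntry:
    """A content entry stored as typed fields rather than a single HTML blob."""
    id: str
    headline: str
    body: str
    date_modified: str  # ISO 8601 date
    about: list[Entity] = field(default_factory=list)     # entities the entry describes
    related_ids: list[str] = field(default_factory=list)  # links to other entries


def entries_about(entries: list[ContentEntry], entity_id: str) -> list[str]:
    """Return the ids of entries that reference the given entity."""
    return [e.id for e in entries if any(ent.id == entity_id for ent in e.about)]


# Illustrative sample data only.
pro_plan = Entity(id="ent-pro-plan", type="Product", name="Pro Plan")
entries = [
    ContentEntry("post-1", "Pro Plan overview", "Body text", "2025-01-10", about=[pro_plan]),
    ContentEntry("post-2", "Getting started", "Body text", "2024-06-02"),
]
print(entries_about(entries, "ent-pro-plan"))
```

Because the entity link is explicit, an agent can find every entry that mentions a product with a lookup rather than a text search.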

Why GraphQL Beats REST for Agentic Patterns

GraphQL is inherently better suited for LLMs and agentic patterns than REST because it allows more efficient querying, stronger typing, and real-time adaptability. When AI agents need to fetch specific content fields or traverse relationships between entities, GraphQL's declarative nature means fewer round trips and more precise data retrieval.

This efficiency compounds when agents handle tasks autonomously. Rather than making multiple REST calls to assemble context, a single GraphQL query can retrieve exactly what the agent needs to update metadata, generate translations, or optimize for SEO.
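As a rough illustration of the single-query idea, the sketch below builds one GraphQL request body that asks for an entry's metadata, a translation, and its related entries together. The schema fields (`entry`, `seo`, `translations`, `relatedEntries`) are hypothetical; only the `query`/`variables` JSON body shape follows the common GraphQL-over-HTTP convention.

```python
import json

# Hypothetical schema: these field names are illustrative and do not
# belong to any specific CMS.
ENTRY_CONTEXT_QUERY = """
query EntryContext($id: ID!, $locale: String!) {
  entry(id: $id) {
    headline
    dateModified
    seo { metaTitle metaDescription }
    translations(locale: $locale) { headline status }
    relatedEntries { id headline }
  }
}
"""


def build_graphql_request(entry_id: str, locale: str) -> str:
    """Serialize one request body carrying the query and its variables."""
    return json.dumps({
        "query": ENTRY_CONTEXT_QUERY,
        "variables": {"id": entry_id, "locale": locale},
    })


body = build_graphql_request("post-1", "de-DE")
print(json.loads(body)["variables"])
```

With REST, the same context would typically need separate calls for the entry, its SEO metadata, its translations, and its related entries.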

Key takeaway: GEO-native architecture treats content as queryable, structured data where agents can traverse relationships and take action through schema-aware operations.

What Makes Cursor-Style Chat a Content Velocity Engine?

Content velocity is the speed at which teams can ideate, create, and deliver content that drives business outcomes. Cursor-style chat interfaces replace point-and-click CMS editing with natural language prompts, collapsing multi-screen workflows into single commands.

Like Cursor's chat for code, a chat interface in the CMS lets you ask questions or solve problems by describing what you want in natural language. The system automatically provides context from your entire content library, documentation, and web sources, eliminating manual copy-pasting between tools.

Consider the workflow difference. Traditional CMS editing requires navigating to each post, opening the editor, finding the field, making changes, and saving. With chat-based editing, typing "Change all mentions of our old pricing from $99 to $149" instantly propagates that change across every relevant piece of content.

Structured, reusable content already shows what this kind of efficiency looks like. Vanguard achieved 6x to 10x more efficient website creation with reusable components while increasing quality engagement by 176% through personalization.

"The speed of execution becomes everything. How quickly you can go from idea to outcome massively depends on the toolchain and processes you have in place."

Typical Workflow: From Prompt to Published Update

A chat-based editing workflow follows a predictable pattern:

• User prompt: User messages contain the text you type along with the context you've referenced, whether that's a specific page, content collection, or global instruction.

• Context assembly: The system gathers relevant content across your CMS, pulling in schema definitions, existing values, and related entries.

• AI processing: The model interprets intent and generates proposed changes, respecting your content model and governance rules.

• Review and apply: Changes appear as inline diffs with accept/revert controls. Human approval gates high-risk modifications.

• Propagation: Accepted changes cascade through linked content, updating references, translations, and dependent fields automatically.

This pattern transforms content operations from sequential editing to declarative intent. Teams describe outcomes rather than clicking through procedures.
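The propose, review, and apply steps can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions: a plain string replacement stands in for the AI processing step, the content library is an in-memory dictionary of sample text, and `ProposedChange`, `propose_replacements`, and `apply_accepted` are hypothetical names.

```python
import difflib
from dataclasses import dataclass


@dataclass
class ProposedChange:
    entry_id: str
    before: str
    after: str

    def diff(self) -> str:
        """Render the change as a unified diff for human review."""
        lines = difflib.unified_diff(
            self.before.splitlines(), self.after.splitlines(),
            fromfile=f"{self.entry_id} (current)",
            tofile=f"{self.entry_id} (proposed)",
            lineterm="",
        )
        return "\n".join(lines)


def propose_replacements(library: dict[str, str], old: str, new: str) -> list[ProposedChange]:
    """Stand-in for the AI step: propose an edit for every entry containing `old`."""
    return [
        ProposedChange(entry_id, text, text.replace(old, new))
        for entry_id, text in library.items()
        if old in text
    ]


def apply_accepted(library: dict[str, str], changes: list[ProposedChange], accepted: set[str]) -> None:
    """Write back only the changes a reviewer accepted."""
    for change in changes:
        if change.entry_id in accepted:
            library[change.entry_id] = change.after


# Illustrative sample content only.
library = {
    "pricing-page": "The Pro plan costs $99 per month.",
    "faq": "Is there a discount on the $99 plan?",
    "contact": "Contact us for a demo.",
}
changes = propose_replacements(library, "$99", "$149")
for change in changes:
    print(change.diff())
apply_accepted(library, changes, accepted={"pricing-page"})
```

Keeping proposal and application as separate functions is what makes the review gate possible: nothing is written until a reviewer names the entries to accept.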

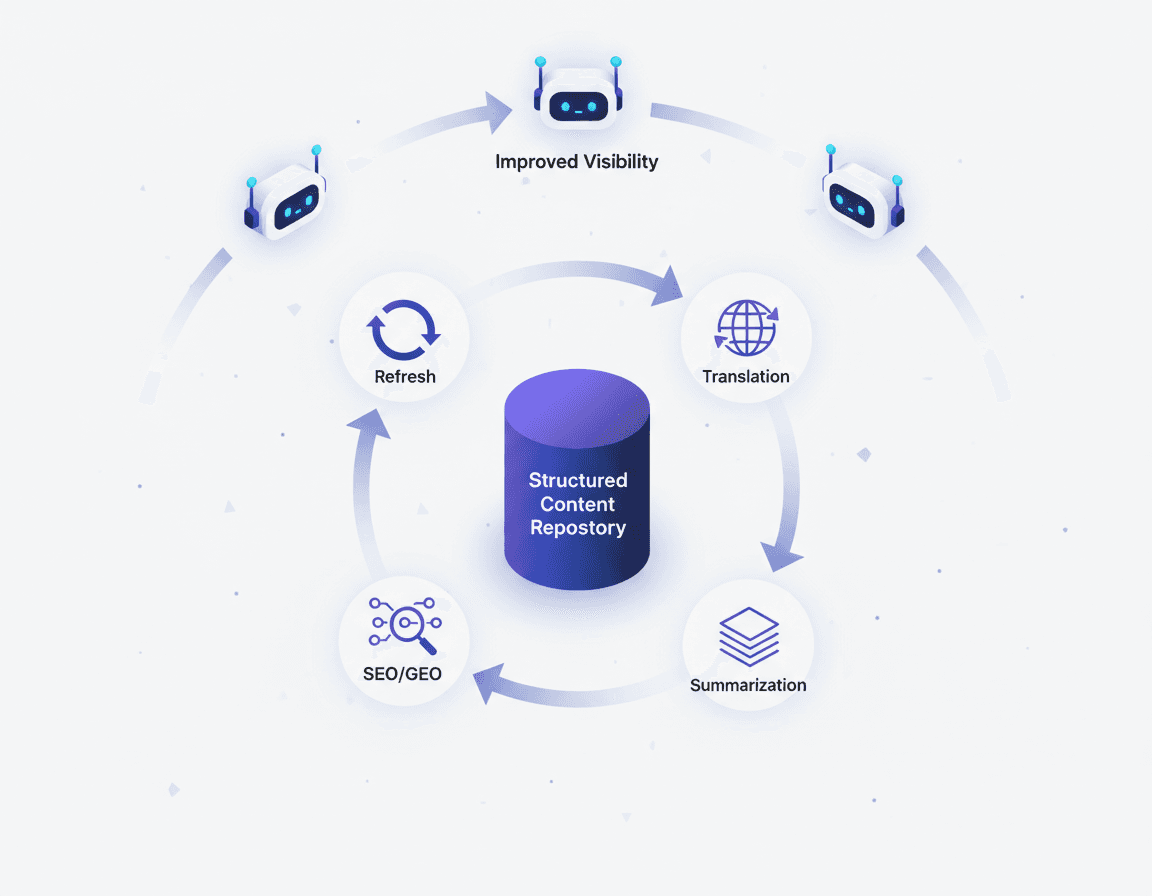

How Do AI Agents Drive Autonomous Refresh, Translation & GEO?

AI agents automate translation, summarization, and SEO directly inside publishing workflows. With roles, permissions, and audit trails on every action, these agents function as autonomous teammates that respect existing governance structures.

The business case is clear. Most teams still treat SEO content as "evergreen" assets, but automated content refreshing is quickly becoming the only reliable way to keep pages accurate, competitive, and visible inside AI-driven experiences.

Relixir's autonomous refresh capability demonstrates this approach. The platform continuously scans content libraries for outdated information, automatically syncs with knowledge bases, and refreshes content to maintain rankings. Relixir-generated blogs get cited 3x more often in AI search than traditional blogs.

| Agent Type | Function | GEO Impact |
|---|---|---|
| Translation Agent | Localizes entries into selected locales as a workflow step | Expands visibility across multilingual AI queries |
| Summarization Agent | Creates concise summaries from long-form content | Provides quotable snippets LLMs can extract |
| SEO/GEO Agent | Runs pre-publish checks and surfaces recommendations | Ensures structural elements for AI citation |
| Refresh Agent | Detects outdated information and updates automatically | Maintains recency signals that LLMs prioritize |
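One way to picture roles, permissions, and audit trails on every agent action is the minimal Python sketch below. The role names, permission strings, and the `perform` helper are assumptions made for illustration; a real platform would load roles from its own configuration and persist the log.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical role-to-permission mapping, hard-coded here for brevity.
ROLE_PERMISSIONS = {
    "translation_agent": {"read", "write_translation"},
    "seo_agent": {"read", "suggest"},
}


@dataclass
class AuditLog:
    records: list[dict] = field(default_factory=list)

    def record(self, agent: str, action: str, entry_id: str, allowed: bool) -> None:
        """Append one audit record with a UTC timestamp."""
        self.records.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,
            "entry_id": entry_id,
            "allowed": allowed,
        })


def perform(agent_role: str, action: str, entry_id: str, log: AuditLog) -> bool:
    """Check the role's permissions, log the attempt either way, and report the outcome."""
    allowed = action in ROLE_PERMISSIONS.get(agent_role, set())
    log.record(agent_role, action, entry_id, allowed)
    return allowed


log = AuditLog()
print(perform("translation_agent", "write_translation", "post-1", log))
print(perform("seo_agent", "publish", "post-1", log))
```

Denied attempts are logged as well as allowed ones, so reviewers can see what an agent tried to do, not only what it did.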

Continuous Refresh Signals for AI Overviews

URL freshness directly impacts citation likelihood. Research shows that URLs updated within the last 30 days are 2.5 times more likely to be cited by LLMs than those older than 90 days.

Generative systems infer freshness from multiple signals:

• Last-modified dates and schema timestamps

• Recency of statistics and examples in the copy

• Technical markers like sitemap lastmod values

• Textual cues mentioning current timeframes

Continuous updating also creates more frequent "recrawl hooks" for search engines, reinforcing that your content is the best place to source fresh facts for a given topic cluster.
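A refresh agent needs some way to decide which pages are due for an update. The Python sketch below buckets pages by age using the 30-day and 90-day marks from the research cited above and emits a sitemap entry with a lastmod value. The bucket names, the intermediate "aging" tier, and the sample URLs and dates are assumptions for illustration.

```python
from datetime import date
from xml.sax.saxutils import escape


def freshness_bucket(modified: date, today: date) -> str:
    """Bucket a page by age in days: up to 30, up to 90, or older."""
    age_days = (today - modified).days
    if age_days <= 30:
        return "fresh"
    if age_days <= 90:
        return "aging"  # assumed middle tier, not taken from the cited research
    return "stale"


def sitemap_url_entry(url: str, modified: date) -> str:
    """Emit a sitemap <url> element whose lastmod is an ISO 8601 date."""
    return f"<url><loc>{escape(url)}</loc><lastmod>{modified.isoformat()}</lastmod></url>"


# Illustrative sample data only.
today = date(2025, 6, 30)
pages = {
    "https://example.com/pricing": date(2025, 6, 15),
    "https://example.com/guide": date(2025, 2, 1),
}
for url, modified in pages.items():
    print(freshness_bucket(modified, today), sitemap_url_entry(url, modified))
```

Passing `today` in explicitly, rather than reading the clock inside the function, keeps the check reproducible when it runs in a scheduled job or a test.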

Why Model Content with JSON-LD & Entity Graphs for LLMs?

Generative engines rely on structured signals to understand, segment, and reuse your content. Without explicit schema markup, LLMs must infer relationships and attributes, increasing the chance they'll cite competitors with clearer structured data.

Schema.org provides the shared vocabulary, defining types like Article, Product, FAQPage, and LocalBusiness along with their supported properties. JSON-LD is the most widely used format because it does not interfere with visible HTML and works seamlessly with major search engines.

Structured data improves how LLMs interpret, retrieve, and reuse information in three ways:

• Reduces ambiguity: Explicit entity definitions tell models exactly what your content describes

• Improves retrieval quality: Structured queries return more relevant content chunks

• Strengthens SEO-to-AI connection: Schema signals align with user intent across both traditional and AI search

Implementation requires attention to rendering. Although Gemini and Copilot use crawlers that can render JavaScript, other major LLM platforms like ChatGPT and Claude use crawlers that do not have this capability. Server-side rendering ensures visibility across all platforms.
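A minimal Python sketch of emitting JSON-LD on the server follows, so the markup is already in the initial HTML response for crawlers that do not run JavaScript. The helper names and sample values are illustrative; the `@context`, `@type`, `headline`, `author`, `datePublished`, and `dateModified` keys are standard Schema.org Article vocabulary.

```python
import json


def article_json_ld(headline: str, author: str, date_published: str, date_modified: str) -> dict:
    """Build a Schema.org Article object as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Organization", "name": author},
        "datePublished": date_published,
        "dateModified": date_modified,
    }


def render_json_ld_script(data: dict) -> str:
    """Serialize the dict into a script tag for inclusion in server-rendered HTML."""
    # Escape "</" so a string value containing "</script>" cannot close the tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Illustrative sample values only.
html_snippet = render_json_ld_script(
    article_json_ld("Example headline", "Example Co", "2025-01-10", "2025-06-15")
)
print(html_snippet)
```

Generating the tag from the same structured fields the CMS stores means `dateModified` changes whenever the entry does, which keeps the schema timestamp aligned with the visible content.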

How to Govern AI Content: Compliance, Safety & Brand Integrity

CMS vendors are innovating with AI interfaces, visual builders, and personalization features, but these capabilities create new governance requirements. Legal and compliance teams often block AI initiatives because they fear unchecked publication of machine-generated errors.

The threat landscape is real. Sift reported a 22% increase in blocked content in Q1 2024 versus Q1 2023, while 76% of fraud and risk professionals believe their business has been targeted by AI-generated fraud.

Effective AI content governance requires multiple control layers:

| Component | Purpose | Implementation |
|---|---|---|
| Brand Style Pack | Teaches agents how to write like you | Versioned knowledge base with voice, tone, banned terms |
| Policy Validators | Blocks off-brand or risky content | Automated checks for tone, inclusivity, claims |
| Approvals & Audit | Adds human judgment to high-risk outputs | Reviewers, tiers, change logs, rollback capabilities |
| Scorecard & Feedback | Continuous improvement loop | Adherence metrics; train from accepts |
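As a rough sketch of how a style pack and a policy validator might fit together, the Python example below checks an agent's draft against banned terms and a sentence-length limit, then lists violations that would route the draft to human approval. The `StylePack` fields, the specific rules, and the sample draft are assumptions; real validators for tone, inclusivity, and claims would be considerably more involved.

```python
import re
from dataclasses import dataclass


@dataclass
class StylePack:
    """Hypothetical versioned brand rules that a draft is checked against."""
    version: str
    banned_terms: list[str]
    max_sentence_words: int


def validate(draft: str, pack: StylePack) -> list[str]:
    """Return human-readable violations; an empty list means the draft passes."""
    violations = []
    for term in pack.banned_terms:
        # Whole-word, case-insensitive match for each banned term.
        if re.search(rf"\b{re.escape(term)}\b", draft, flags=re.IGNORECASE):
            violations.append(f"banned term: {term}")
    # Split on whitespace that follows sentence-ending punctuation.
    for sentence in re.split(r"(?<=[.!?])\s+", draft.strip()):
        if len(sentence.split()) > pack.max_sentence_words:
            violations.append(f"sentence too long: {sentence[:40]}")
    return violations


pack = StylePack(version="1.0", banned_terms=["guaranteed", "best-in-class"], max_sentence_words=30)
draft = "Our platform delivers guaranteed results. It is easy to set up."
issues = validate(draft, pack)
print(issues if issues else "draft passes; no approval needed")
```

Returning a list of violations instead of a bare pass/fail flag gives reviewers the reason a draft was held, and the same records can feed the adherence scorecard.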

Adobe Experience Manager's Governance Agent enforces security, regulatory, and brand policies to ensure every interaction adheres to established standards. It validates content against brand guidelines while managing permissions and digital rights across content ecosystems.

"Agentic AI doesn't need blind trust; it needs earned trust built through visibility, constraints, and feedback loops."

Which Metrics Prove Agentic CMS Visibility & Revenue Impact?

AI visibility is becoming its own performance channel, one that signals which brands are trusted enough to enter the answer. The metrics differ fundamentally from traditional SEO tracking.

The investment trajectory is clear: 97% of digital leaders reported positive impact from AEO/GEO in 2025, and 94% plan to increase investments in 2026. Among CMOs surveyed, 56% made significant investments in AEO/GEO strategies.

AI referral traffic accounts for 1.08% of all website traffic across key industries, growing approximately 1% month-over-month. ChatGPT dominates as the primary source, accounting for 87.4% of AI referral traffic.

| Metric | Definition | Target |
|---|---|---|
| AI Mention Rate | Percentage of relevant queries where your brand appears | Increasing trend |
| Citation Rate | How often AI search engines cite your content as a source | 3x improvement with optimized content |
| Share of Voice | Your visibility relative to competitors for specific queries | Category leadership |
| URL Freshness | How recently cited pages were last updated | Within 30 days |
| Content Health Score | Aggregated signal across traffic, rankings, and AI inclusion | Prioritize updates |
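The first three metrics in the table can be computed from a set of sampled AI answers. The Python sketch below assumes the answers have already been collected and parsed into brand mentions and cited domains; the `AnswerSample` structure, the exact formulas, and the sample data are one reasonable interpretation of the definitions above, not a standard.

```python
from dataclasses import dataclass


@dataclass
class AnswerSample:
    """One AI answer collected for a tracked query (collection is out of scope here)."""
    query: str
    brands_mentioned: list[str]
    cited_domains: list[str]


def mention_rate(samples: list[AnswerSample], brand: str) -> float:
    """Share of sampled answers that mention the brand."""
    if not samples:
        return 0.0
    return sum(brand in s.brands_mentioned for s in samples) / len(samples)


def citation_rate(samples: list[AnswerSample], domain: str) -> float:
    """Share of sampled answers that cite the domain as a source."""
    if not samples:
        return 0.0
    return sum(domain in s.cited_domains for s in samples) / len(samples)


def share_of_voice(samples: list[AnswerSample], brand: str) -> float:
    """The brand's mentions divided by all brand mentions across the samples."""
    total = sum(len(s.brands_mentioned) for s in samples)
    if total == 0:
        return 0.0
    return sum(s.brands_mentioned.count(brand) for s in samples) / total


# Illustrative sample data only.
samples = [
    AnswerSample("best payroll tool", ["Acme", "Globex"], ["acme.example"]),
    AnswerSample("payroll for startups", ["Globex"], ["globex.example"]),
    AnswerSample("payroll pricing", ["Acme"], ["acme.example", "news.example"]),
    AnswerSample("what is payroll", [], []),
]
print(mention_rate(samples, "Acme"), citation_rate(samples, "acme.example"), share_of_voice(samples, "Acme"))
```

Tracking the same query set over time is what turns these ratios into the "increasing trend" target from the table.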

"Velocity isn't about producing more; it's about making content better, faster, and safer."

Relixir vs Traditional CMS: Choosing Your GEO Stack

Traditional CMS platforms like Webflow, WordPress, and Contentful were built for 2000s-era SEO. They require manual content publishing and manual refresh cycles, and they provide no visibility into AI search results. Building AI features into legacy systems is often a non-starter due to rigid monolithic architectures.

Relixir takes a different approach as a GEO-native CMS. The platform provides a headless CMS with built-in AI agents that autonomously generate and refresh content optimized for LLM citations. Its proprietary writing model trained on 100,000+ blogs produces content specifically structured for how LLMs read and cite information.

| Capability | Traditional CMS | Relixir |
|---|---|---|
| Content Publishing | Manual | AI agent-assisted with chat interface |
| Content Refresh | Manual audit cycles | Autonomous, syncs with knowledge base |
| AI Search Visibility | None | Full-suite analytics across ChatGPT, Perplexity, Claude |
| Structured Data | Developer-dependent | Automated JSON-LD, FAQ sections, entity signals |
| Citation Optimization | Not addressed | 3x higher citation rate than traditional blogs |
| Deployment | Single architecture | Hosted, headless, or CMS wrapper options |

Customers using Relixir achieve a 3-5x increase in AI search mention rate within 2-4 weeks of deployment, with many early-stage startups relying on the platform as their sole inbound motion.

Key Takeaways & Next Steps

The window to dominate AI-search-driven revenue is open now. LLMs increasingly pull from domain-specific content over third-party sources. Your blog is becoming the citation engine for AI search.

Core principles for building your agentic GEO-native CMS:

• Treat content as structured data with explicit schema markup

• Replace point-and-click editing with chat-based natural language workflows

• Deploy autonomous agents for refresh, translation, and GEO optimization

• Implement governance controls that build earned trust through visibility and constraints

• Measure AI-specific metrics: citation rate, mention rate, URL freshness

Relixir's vision is to build the new standard content database for AI search. Backed by Y Combinator and serving 400+ B2B companies including Rippling, Airwallex, and HackerRank, the platform offers the complete infrastructure for content teams serious about AI search visibility.

Companies that establish AI search visibility today will have a significant competitive advantage as the shift from traditional search to AI search accelerates. Visit Relixir.ai to learn how we can help you dominate AI search for your category.

Frequently Asked Questions

What is an agentic GEO-native CMS?

An agentic GEO-native CMS integrates autonomous AI agents into content workflows. Teams manage content through natural language chat, and the agents keep that content fresh, structured, and visible across AI search engines like ChatGPT and Google AI Overviews.

How does a GEO-native CMS differ from traditional CMS platforms?

A GEO-native CMS treats content as structured data optimized for AI search engines, unlike traditional CMS platforms that focus on manual content publishing and lack AI search visibility. It uses AI agents for automated content refresh and optimization.

Why is structured data important in a GEO-native CMS?

Structured data, such as JSON-LD, is crucial in a GEO-native CMS because it provides machine-readable information that helps AI search engines understand, retrieve, and cite content accurately, improving AI search visibility and citation rates.

How does cursor-style chat improve content velocity?

Cursor-style chat allows content teams to use natural language prompts to manage content, significantly speeding up the ideation, creation, and delivery process by collapsing multi-step workflows into single commands, enhancing efficiency and output.

What role do AI agents play in content management?

AI agents automate tasks like content refresh, translation, and SEO optimization within a CMS, ensuring content remains current and competitive in AI-driven search environments, while respecting governance and compliance standards.

How does Relixir's CMS enhance AI search visibility?

Relixir's CMS enhances AI search visibility by using AI agents to autonomously generate and refresh content optimized for LLM citations, providing full-suite analytics for AI search performance, and ensuring content is structured for AI citation.

Sources

https://solvedbycode.ai/blog/complete-guide-generative-engine-optimization-geo-2026

https://www.llmcms.org/guides/ai-enhanced-cms-vs-traditional-headless-cms

https://business.adobe.com/customer-success-stories/vanguard-case-study.html

https://searchatlas.com/research/url-freshness-in-llm-generated-answers/

https://www.forrester.com/report/the-forrester-wave-tm-content-management-systems-q1-2025/RES182113

https://sift.com/resources/ebooks-reports/q2-2024-digital-trust-safety-index

https://www.conductor.com/academy/aeo-geo-benchmarks-report/