Can AI Penalize Outdated Content?

AI search engines actively penalize outdated content, with 68% of AI Overview citations coming from content updated within the last 12 months. Pages not refreshed quarterly are three times more likely to lose AI citations, creating a visibility cliff that arrives faster than most teams expect.

TLDR

• AI assistants cite content that's 25.7% fresher than traditional search results, with average citation age of 1,064 days versus 1,432 days for organic SERPs

• Content updated within 30 days shows significantly higher AI answer visibility, while pages over 4 years old receive only 3.6% of AI-driven traffic

• AI systems automatically add the current year to 28.1% of queries, creating an invisible recency filter

• Pages with substantial updates (500+ words) see 43% higher AI Overview visibility compared to unchanged content

• Technical signals like Schema markup, XML sitemaps, and last-modified headers directly influence AI citation frequency

• Refresh ROI data shows 300% increases in AI traffic and 3x improvements in organic visibility for actively maintained content

Is AI starting to penalize outdated content? Our guide unpacks how modern answer engines weigh freshness, what triggers demotions, and which tactics keep pages in the citation set.

Is AI penalizing outdated content in 2026?

The short answer is yes. Large-scale studies confirm that AI assistants actively prefer fresher sources when generating answers.

An Ahrefs analysis of 17 million citations found that "AI assistants prefer citing fresher content." The average age of URLs cited by AI assistants is 1,064 days, compared to 1,432 days for URLs in organic SERPs, making AI citations roughly 25.7% fresher.
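The 25.7% figure follows directly from the two averages quoted above. A quick check, assuming the organic SERP average is used as the baseline (the variable names here are illustrative, not taken from the Ahrefs study):

```python
# Average URL ages in days, as quoted from the Ahrefs analysis above.
ai_citation_age_days = 1064
organic_serp_age_days = 1432

# Relative difference, with the organic SERP average as the baseline.
freshness_gap = (organic_serp_age_days - ai_citation_age_days) / organic_serp_age_days

print(f"AI-cited URLs are {freshness_gap:.1%} fresher")
```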

This shift matters because half of consumers now use AI-powered search, a behavior projected to influence $750 billion in revenue by 2028.

About 50% of Google searches already include AI summaries, a number expected to exceed 75% by 2028.

The rise of AI-driven search engines like ChatGPT and Google AI Overviews is fundamentally changing how content gets discovered and consumed. These platforms prioritize content that is recent and relevant to the user's query.

Perhaps most telling: 68% of AI Overview citations come from content updated within the last 12 months. If your pages haven't been touched in over a year, you're competing at a significant disadvantage.

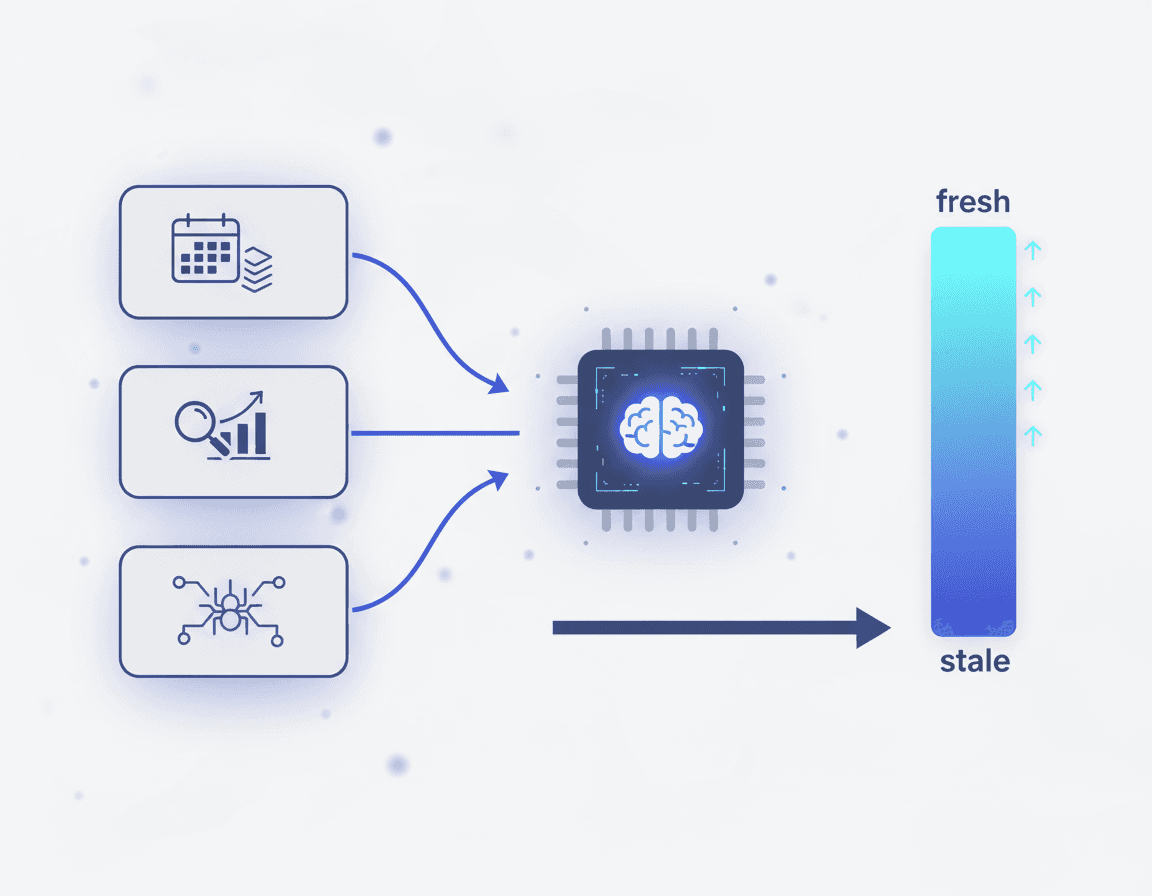

How do AI models measure content recency and authority?

AI models evaluate freshness through a combination of explicit signals and implicit cues that determine whether to prioritize older authoritative content or newer updates.

LLM content freshness signals are "the textual, technical, and behavioral cues that hint at when information was last updated and how trustworthy it is for time-sensitive questions."

These signals fall into distinct categories:

Explicit date signals: Publication dates, modification timestamps, and Schema.org markup for datePublished and dateModified

Implicit freshness indicators: References to current events, recent statistics, and citations from the current year

Behavioral cues: Crawl frequency, indexing patterns, and user engagement metrics

Different platforms weight these signals differently. Perplexity cites approximately 2.8x more sources per query than ChatGPT (averaging 21+ citations vs ~8), and those sources tend to be more recent. This is because Perplexity performs real-time web searches and often favors recent sources for time-sensitive topics.

Interestingly, AI systems automatically add the current year to 28.1% of sub-queries even when users don't include it in their original prompt. This built-in recency bias means your content competes against an invisible year filter.

URL freshness measures how recently a cited page was published relative to the moment the model produced the response. When web access is enabled, models pull recently published pages into answers. When disabled, they fall back to older material rooted in training data.

Key takeaway: Recently updated content from high-authority domains outperforms both old authoritative content and new low-authority content.

When does stale content drop out of AI answers?

The visibility cliff arrives faster than most content teams expect.

SearchAtlas research found that URLs updated within the last 30 days are more likely to appear in AI-generated answers. As the researchers noted, "Outdated content is often penalized by AI search engines, leading to decreased visibility."

The data gets more specific when examining traffic patterns. A Seer Interactive case study revealed that over 80% of AI-driven traffic went to pages updated within the last two years, while only 3.6% went to pages over four years old.

AirOps research quantified the penalty: "Pages that teams fail to refresh at least once per quarter are three times more likely to lose AI citations over time."

The freshness threshold varies by query type:

| Query Type | Freshness Requirement |
|---|---|
| Breaking news | Hours to days |
| Product comparisons | 30-90 days |
| Industry statistics | Quarterly |
| Definitional content | 6-12 months |
| Evergreen guides | Annual review |
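The thresholds in the table above can be turned into a simple staleness check for a content audit. This is a minimal sketch, not a rule any AI system is known to apply: the day counts are assumptions read off the upper end of each range ("hours to days" is taken as 2 days, "quarterly" as 90 days), and the `is_stale` helper and query-type keys are hypothetical names.

```python
from datetime import date

# Upper bounds in days, read off the table above. The exact cutoffs are
# assumptions: "hours to days" is taken as 2 days, "quarterly" as 90 days.
MAX_AGE_DAYS = {
    "breaking_news": 2,
    "product_comparison": 90,
    "industry_statistics": 90,
    "definitional": 365,
    "evergreen_guide": 365,
}


def is_stale(last_updated: date, query_type: str, today: date) -> bool:
    """Return True when the page is older than its query type allows."""
    age_days = (today - last_updated).days
    return age_days > MAX_AGE_DAYS[query_type]


# A product comparison last touched 123 days ago is past its 90-day window,
# while an evergreen guide of the same age is still within its annual review.
print(is_stale(date(2025, 10, 1), "product_comparison", date(2026, 2, 1)))
print(is_stale(date(2025, 10, 1), "evergreen_guide", date(2026, 2, 1)))
```

Passing `today` explicitly keeps the check deterministic, which makes it easier to run the same audit against a fixed reporting date.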

Pages updated with substantial additions (500+ new words of meaningful content) saw 43% higher AI Overview visibility compared to unchanged content.
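One rough way to check whether a revision clears the 500-word bar is to diff the old and new text at the word level. The sketch below uses Python's standard `difflib`; the function names are hypothetical, and a word count is only a proxy, since it cannot judge whether the added content is meaningful.

```python
from difflib import SequenceMatcher

SUBSTANTIAL_UPDATE_WORDS = 500  # threshold quoted in the text above


def added_word_count(old_text: str, new_text: str) -> int:
    """Count words in the new version that were inserted or rewritten."""
    old_words = old_text.split()
    new_words = new_text.split()
    matcher = SequenceMatcher(a=old_words, b=new_words, autojunk=False)
    added = 0
    for tag, _i1, _i2, j1, j2 in matcher.get_opcodes():
        # "insert" and "replace" spans both contribute new words on the b side.
        if tag in ("insert", "replace"):
            added += j2 - j1
    return added


def is_substantial_update(old_text: str, new_text: str) -> bool:
    """Apply the 500-word threshold to a pair of page versions."""
    return added_word_count(old_text, new_text) >= SUBSTANTIAL_UPDATE_WORDS
```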

Which freshness levers can marketers actually control?

Marketers have direct influence over both the textual cues embedded in content and the technical infrastructure that signals recency to AI systems.

Textual & on-page cues

Textual cues live entirely inside the content and are visible to both crawlers and models. These include:

Time-bounded statements: "As of February 2026" rather than vague temporal references

Current statistics: Replace outdated numbers with recent data points

Recent citations: Pages citing sources from the current year tend to appear at earlier citation positions (typically positions 3-5) versus pages with only older references (positions 6-8)

Updated examples: Swap dated case studies for current ones

Fresh screenshots: Replace outdated UI images with current versions

AirOps recommends using the exact user question as a subheading, then answering it in the first sentence. This structure helps AI extract and cite your content efficiently.

Technical & metadata cues

Google explicitly recommends using both datePublished (original) and dateModified (last substantive update) in your Schema.org markup.
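As an illustration, the two date properties can be emitted as JSON-LD from whatever dates your CMS stores. This is a minimal sketch: the `article_jsonld` helper and the sample headline are hypothetical, and a production Article object would usually carry more properties (author, image, and so on).

```python
import json
from datetime import date


def article_jsonld(headline: str, published: date, modified: date) -> str:
    """Build a minimal Schema.org Article object with both date properties."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published.isoformat(),  # original publication date
        "dateModified": modified.isoformat(),    # last substantive update
    }
    return json.dumps(data, indent=2)


print(article_jsonld("Can AI Penalize Outdated Content?",
                     date(2025, 3, 10), date(2026, 2, 1)))
```

The output would typically be placed in a `<script type="application/ld+json">` tag in the page head. Only bump `dateModified` when the content has actually changed, so the declared date matches what a crawler sees.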

Technical signals that influence crawl frequency and AI visibility include:

XML sitemap updates: Signal content changes to crawlers

RSS feed maintenance: Provide structured update notifications

Last-modified headers: HTTP signals that indicate page freshness

Canonical tags: Consolidate authority to your preferred URL version
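Two of these signals, the Last-Modified header and the sitemap entry, can be generated from the same modification timestamp. The sketch below uses only the Python standard library; the helper names and the example.com URL are placeholders, and how the values get wired into your server or sitemap generator depends on your stack.

```python
import xml.etree.ElementTree as ET
from datetime import datetime, timezone
from email.utils import format_datetime


def last_modified_header(modified: datetime) -> str:
    """Format a UTC timestamp as an HTTP date for the Last-Modified header."""
    return format_datetime(modified.astimezone(timezone.utc), usegmt=True)


def sitemap_url_entry(loc: str, modified: datetime) -> str:
    """Build one <url> element with a <lastmod> date for an XML sitemap."""
    url = ET.Element("url")
    ET.SubElement(url, "loc").text = loc
    ET.SubElement(url, "lastmod").text = modified.date().isoformat()
    return ET.tostring(url, encoding="unicode")


modified = datetime(2026, 2, 1, 12, 0, tzinfo=timezone.utc)
print("Last-Modified:", last_modified_header(modified))
print(sitemap_url_entry("https://example.com/guide", modified))
```

Deriving both values from one stored timestamp keeps the header, the sitemap, and any on-page dates from drifting apart.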

Continuous updating creates more frequent "recrawl hooks" for engines, reinforcing that your content is the best place to source fresh facts for a given topic cluster.

81% of AI-cited pages use schema markup. While schema alone doesn't guarantee citations, its absence almost guarantees you won't get them.

Why move from manual SEO to autonomous GEO refresh?

Manual content refresh cycles simply cannot keep pace with AI's freshness demands.

Most enterprises benefit from a quarterly "evergreen sprint" for high-value topics, with weekly micro-updates for volatile data. But executing this manually across hundreds or thousands of pages overwhelms most content teams.

Relixir's autonomous refresh capability continuously scans entire content libraries for outdated information, automatically syncing with knowledge bases to maintain accuracy. This eliminates the manual burden that traditional CMS platforms require.

Consider the Deloitte 2025 program: a global consumer-electronics firm automated the inventory of outdated passages, drafted updates with a fine-tuned GPT-4o workflow, and applied passage-level AEO/GEO structuring. Within two quarters, the refreshed corpus reclaimed first-page placement for 68% of target queries, reduced bounce rate by 28%, and cut support-driven call volume by 14%, modeling a 3.1x content-ROI uplift.

The challenge with traditional CMS platforms is architectural. As Storyblok notes, "GEO isn't a 'set it and forget it' game. AI systems reward content that's up-to-date, consistent, and reinforced by multiple trusted mentions."

A survey of 321 B2B SaaS content teams reveals that quality matters more than quantity after reaching baseline output. The median team publishes 11-20 blog posts per quarter, but maintaining freshness across that volume requires systematic automation.

The Digital Bloom's 2025 AI Citation Report confirms that content specifically structured for how LLMs read and cite information gets cited 3x more often in AI search than traditional blogs.

Key takeaway: Generative engine optimization is the discipline of designing and maintaining content so that large language models and answer engines can accurately retrieve, summarize, and cite it.

How can structure & schema make freshness explicit?

Structured data and dedicated endpoints transform your freshness signals from implicit suggestions into explicit declarations that AI systems can parse instantly.

81% of AI-cited pages use schema markup, and pages with structured data are up to 40% more likely to appear in AI summaries and citation positions.

The most citation-worthy schema types include:

FAQPage: Consistently demonstrates the highest citation probability

Article: With datePublished and dateModified properties

Organization: Establishes entity authority

HowTo: Captures procedural queries

Product: Essential for commercial content
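For the FAQPage type at the top of this list, the markup is a list of Question objects, each with an acceptedAnswer. A minimal sketch of generating it from question and answer pairs follows; the `faq_jsonld` helper is a hypothetical name, and the sample pair reuses a question from this article.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a Schema.org FAQPage object from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2)


print(faq_jsonld([
    ("Does AI penalize outdated content?",
     "AI search engines tend to prefer fresher content."),
]))
```

The marked-up questions and answers should match text that is visible on the page.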

However, SearchAtlas research offers an important caveat: "The findings show that higher schema coverage does not result in higher visibility within LLM responses." Content depth and informational quality emerge as stronger drivers of LLM visibility than technical enhancements alone.

The solution isn't more schema but smarter schema. Growth Marshal recommends publishing dedicated JSON-LD endpoints. "LLMs and Google alike reward instant ingestion. Your 2kB fact file will outrank competitors' bloated CMS content with zero backlinks or ad spend."

A study of 39,876 websites found that schema markup (+150.5%) and missing headings (+114.3%) topped the list of optimization impacts, followed by title tag (+68.4%) and canonical link (+63.9%).

What do the numbers say about refresh ROI?

The evidence for content refresh ROI is compelling and consistent across multiple studies.

Seer Interactive's case study documented dramatic results: refreshing outdated content led to a 300% increase in AI traffic for one SaaS HR client and a 54% increase in GPT-User bot hits for a travel aggregator.

Deloitte's evergreen sprint program demonstrated that a disciplined 90-day refresh cycle reclaimed visibility while sustaining a 3.1x modeled content ROI over two quarters.

Semrush's technical SEO study found that top-cited pages demonstrate higher visit duration, lower bounce rates, and better conversion metrics across all traffic sources. This correlation suggests AI visibility and user engagement reinforce each other.

A Boston Consulting Group analysis of expert-led, AI-powered content processes showed:

5x increase in content production

6x improvement in targeted keywords indexed by Google

300% improvement in non-branded keywords occupying Position 0-10

3x improvement in organic traffic from new articles

Meanwhile, two-thirds of AI-only content was never indexed by Google at all, a sign that automation without expertise produces diminishing returns.

Keep content living or watch AI bury it

The data is clear: AI search engines reward fresh, well-structured content and increasingly ignore pages that haven't been updated. With 68% of AI Overview citations coming from content updated within the last 12 months, and pages not refreshed quarterly being 3x more likely to lose citations, content freshness has become a non-negotiable ranking factor.

The challenge is scale. Manual refresh cycles cannot keep pace with AI's freshness demands across a comprehensive content library. Traditional CMS platforms were built for a different era, requiring human effort for every content update.

Relixir's autonomous refresh capability continuously scans entire content libraries for outdated information, automatically syncing with knowledge bases to maintain accuracy. Relixir customers consistently achieve 3-5x increases in AI search mention rate within 2-4 weeks of deployment.

For companies serious about AI search visibility, the path forward requires moving from reactive, manual content maintenance to proactive, automated freshness management. The window to establish AI search dominance is open now. Those who build systematic refresh capabilities today will compound their advantage as AI search continues to capture market share from traditional channels.

Relixir solves this by providing a headless CMS with built-in AI agents that autonomously generate and refresh content optimized for LLM citations. The platform enables companies to create any content collection and then generate and refresh unlimited items within those collections, ensuring AI search engines always see your most current, accurate information.

Frequently Asked Questions

Does AI penalize outdated content?

Yes, AI search engines tend to prefer fresher content. Studies show that AI assistants cite newer sources more frequently than older ones, impacting visibility for outdated content.

How do AI models determine content freshness?

AI models use explicit signals like publication dates and implicit cues such as recent statistics and current event references to assess content freshness. These factors influence how AI prioritizes content in search results.

What are the consequences of not updating content regularly?

Failing to update content can lead to decreased visibility in AI-generated answers. Research indicates that pages not refreshed quarterly are three times more likely to lose AI citations over time.

How can marketers ensure their content remains fresh for AI search engines?

Marketers can maintain content freshness by updating textual cues, such as statistics and examples, and using technical signals like XML sitemaps and schema markup to indicate recency to AI systems.

What role does Relixir play in content freshness?

Relixir provides a headless CMS with AI agents that autonomously refresh content, ensuring it remains up-to-date and optimized for AI search engines, thus maintaining visibility and accuracy.

Sources

https://ahrefs.com/blog/do-ai-assistants-prefer-to-cite-fresh-content/

https://www.qwairy.co/blog/content-freshness-ai-citations-guide

https://www.forrester.com/blogs/shifting-search-behaviors-demand-smarter-content-strategies/

https://searchatlas.com/blog/url-freshness-in-llm-responses-search-enabled-vs-disabled-analysis/

https://searchatlas.com/research/url-freshness-in-llm-generated-answers/

https://www.genrank.co/blog/json-ld-schema-the-secret-language-ai-engines-understand

https://www.singlegrain.com/geo/content-refresh-cycles-for-ai-driven-content/

https://storyblok.com/mp/how-to-optimize-for-generative-engine-optimization-with-a-headless-cms

https://thedigitalbloom.com/learn/2025-ai-citation-llm-visibility-report/

https://searchatlas.com/blog/limits-of-schema-markup-for-ai-search/

https://www.growthmarshal.io/field-notes/how-to-use-endpoints-to-drive-llm-citations

https://www.semrush.com/blog/technical-seo-impact-on-ai-search-study/