Content Refreshing Best Practices [February 2026]

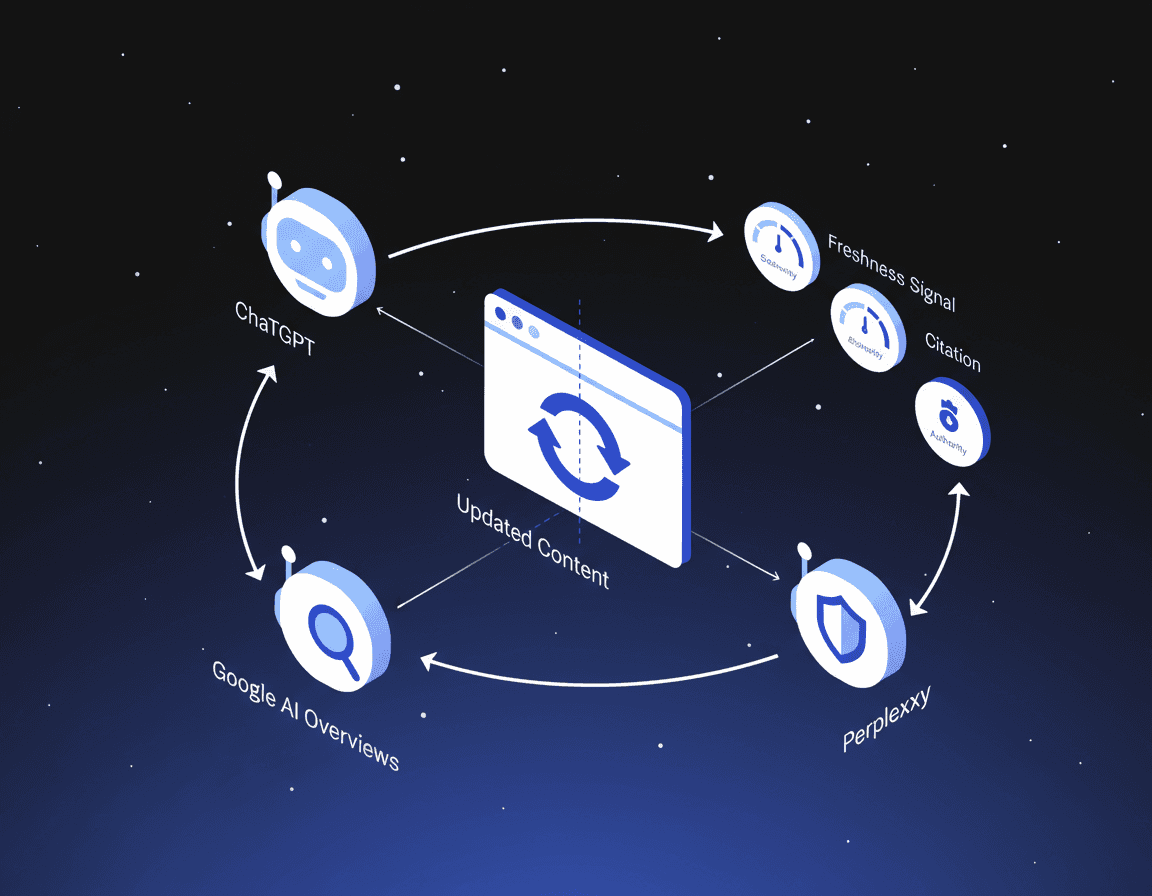

Content refreshing in 2026 means continuously updating your content to stay visible in AI search engines like ChatGPT, Perplexity, and Google AI Overviews. AI systems automatically add "2026" to 28.1% of the sub-queries they generate, filtering out outdated content before it can compete. Building automated refresh systems that detect decay, prioritize updates, and track AI visibility metrics is now essential for maintaining search presence.

TLDR

AI search engines now serve billions of users weekly, with 55% of global organizations adopting generative AI within one year of ChatGPT's release

Fresh content gets cited 30% more often by AI systems, with recently updated pages from authoritative domains outperforming static "evergreen" content

Perplexity cites 2.8x more sources per query than ChatGPT, creating more opportunities for fresh, comprehensive content to appear

Successful refresh systems require four layers: detection, prioritization, execution, and monitoring working together continuously

Schema markup with proper dateModified fields and structured data increases AI citation likelihood by 2-4x

Content refresh best practices have shifted dramatically in 2026 as AI search engines dominate how buyers discover products and solutions. The old playbook of treating blog posts as static "evergreen" assets no longer works when ChatGPT, Perplexity, Claude, and Google AI Overviews are assembling answers by reading, summarizing, and cross-checking multiple sources in real time.

This guide breaks down exactly how to keep your content fresh, structured, and visible to AI search engines in February 2026.

Why 2026 Requires New Content Refresh Playbooks

The shift to AI-powered search is not a future trend. It is already here.

Google's AI Overviews reach 2 billion users. ChatGPT serves 800 million users each week. Perplexity processed 780 million queries in a single month. These numbers represent a fundamental transformation in how buyers research and evaluate solutions.

Most teams still treat big SEO pieces as "evergreen" assets, but automated content refreshing is quickly becoming the only reliable way to keep those pages accurate, competitive, and visible inside AI-driven experiences.

AI Overviews and other generative features do not just list links. They assemble direct answers by reading, summarizing, and cross-checking multiple sources in real time. This means your content competes not just for clicks but for citations.

The adoption curve backs this up. According to McKinsey, 55% of global organizations adopted generative AI in at least one business function less than one year after ChatGPT's release. Brands that refresh continuously keep showing up where AI assembles answers.

How AI Search Engines Judge Freshness & Authority

Different AI providers evaluate content freshness through distinct mechanisms, and understanding these differences is critical for optimization.

Freshness Sensitivity by Platform

AI systems automatically add the current year ("2026") into 28.1% of sub-queries even when users did not include it in their original prompt. This built-in recency bias means outdated content gets filtered out before it can compete.

A study of over 1,000 AI-generated responses found that URLs with recent updates were cited 30% more often than those without. The freshness of a URL significantly impacts the relevance and accuracy of LLM-generated answers.

Key platform differences:

Perplexity cites approximately 2.8x more sources per query than ChatGPT (averaging 21+ citations vs ~8), and those sources tend to be more recent

ChatGPT specifically benefits from Wikipedia presence, which provides positioning benefits that other providers do not offer

Google AI Overviews inherit freshness signals from Google's Query Deserves Freshness (QDF) algorithm

Common Freshness Mistakes

One critical pitfall: changing dates without substance. A case study found that date manipulation without substantive content changes gets flagged and penalized.
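
One way to guard against this pitfall is to gate every date bump behind a check that the body text actually changed. The sketch below is a minimal illustration, not a description of how any search engine detects date manipulation: the function name `is_substantive_update` and the 10% threshold are assumptions chosen for the example.

```python
import difflib


def is_substantive_update(old_text: str, new_text: str,
                          min_changed_ratio: float = 0.10) -> bool:
    """Return True when enough of the body changed to justify a new dateModified.

    The 10% default is a hypothetical threshold; tune it against your own content.
    """
    old_words = old_text.split()
    new_words = new_text.split()
    # ratio() is 1.0 for identical word sequences and 0.0 for fully different ones.
    matcher = difflib.SequenceMatcher(None, old_words, new_words, autojunk=False)
    changed_ratio = 1.0 - matcher.ratio()
    return changed_ratio >= min_changed_ratio
```

A publishing pipeline could call this before writing a new dateModified value and leave the date untouched when it returns False.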

Recently-updated content from high-authority domains outperforms both old authoritative content and new low-authority content. The winning combination is freshness plus authority.

How Do You Build an Automated Content Refresh System?

A resilient system includes four layers working together: detection (spotting decay), prioritization (deciding what matters most), execution (making precise updates), and monitoring (measuring impact and feeding results back into the loop).

Content decay moves faster than most teams can track. A page can slide from position three to position twelve in weeks, long before quarterly audits catch the drop. Only 30% of brands maintain visibility from one AI-run to the next.
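
The detection layer can be sketched as a comparison between two rolling windows of ranking data. This is an illustrative example only: the `RankHistory` type, the four-week window, and the three-position threshold are assumptions, and the positions would come from whatever rank tracker or analytics export you already use.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class RankHistory:
    url: str
    positions: list[float]  # weekly average position, oldest first


def detect_decay(history: RankHistory, window: int = 4,
                 max_drop: float = 3.0) -> bool:
    """Flag a page whose recent average position worsened by max_drop or more.

    A higher position number means a worse ranking, so decay shows up as
    recent_avg - previous_avg being positive.
    """
    if len(history.positions) < 2 * window:
        return False  # not enough data to compare two full windows
    previous_avg = mean(history.positions[-2 * window:-window])
    recent_avg = mean(history.positions[-window:])
    return (recent_avg - previous_avg) >= max_drop
```

Run weekly, a check like this would surface the slide from position three toward position twelve well before a quarterly audit.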

The most scalable systems follow a repeatable, seven-step loop that runs continuously, even as you ship new content.

AI-Driven Scoring & Prioritization

AI content scoring gives you an objective way to rank every page on your site by how badly it needs a refresh and how much upside that refresh could generate.

The P.R.I.O.R.I.T.Y. framework provides a simple way to quantify which posts should be refreshed first by blending performance signals, business value, and effort.

Key scoring dimensions:

Intent alignment: Does content match current search intent?

Topical depth: Are entities and coverage gaps addressed?

User engagement: What do bounce rates and time-on-page indicate?

Content age: How long since last substantive update?

AI visibility: Is the page currently cited in AI responses?

The data supports this approach: 61% of enterprise content assets lose visibility by more than 40% within 14 months of publication due to unmonitored quality decay.
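
The scoring dimensions above can be combined into a single number per page. The sketch below is one possible weighting, not the P.R.I.O.R.I.T.Y. framework's actual formula: the weights, the 0 to 1 input scales, and the names `PageSignals`, `refresh_need`, and `priority_score` are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Hypothetical weights for each dimension; they sum to 1.0.
WEIGHTS = {
    "intent": 0.25,
    "depth": 0.20,
    "engagement": 0.20,
    "age": 0.20,
    "ai_visibility": 0.15,
}


@dataclass
class PageSignals:
    url: str
    intent_alignment: float  # 0..1, where 1 means it matches current intent
    topical_depth: float     # 0..1, where 1 means no coverage gaps
    engagement: float        # 0..1, normalized from bounce rate and time on page
    days_since_update: int
    cited_by_ai: bool
    business_value: float    # 0..1
    effort_hours: float


def refresh_need(p: PageSignals) -> float:
    """Weighted 0..1 score: higher means the page needs a refresh more badly."""
    age = min(p.days_since_update / 365, 1.0)  # cap at one year
    return (
        WEIGHTS["intent"] * (1 - p.intent_alignment)
        + WEIGHTS["depth"] * (1 - p.topical_depth)
        + WEIGHTS["engagement"] * (1 - p.engagement)
        + WEIGHTS["age"] * age
        + WEIGHTS["ai_visibility"] * (0.0 if p.cited_by_ai else 1.0)
    )


def priority_score(p: PageSignals) -> float:
    """Blend need with business value and divide by effort."""
    return refresh_need(p) * p.business_value / max(p.effort_hours, 0.5)


def rank_pages(pages: list[PageSignals]) -> list[PageSignals]:
    """Return pages ordered from highest to lowest refresh priority."""
    return sorted(pages, key=priority_score, reverse=True)
```

Dividing by effort keeps quick, high-value fixes at the top of the queue; a team that cares less about effort could drop that term.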

Agentic Workflows: Humans in the Loop

Agentic SEO is the deployment of AI agents, powered by large language models like ChatGPT, Claude, and Gemini, to autonomously execute complex SEO workflows, in combination with human oversight and validation.

The most successful agentic SEO implementations operate on the "human in the loop" principle, a collaboration model where AI handles the grunt work while human expertise guides strategy and validates output.

AI agents turn refresh into a continuous system that protects rankings, surfaces the right pages to update, and prepares improvements before performance slips. They connect to analytics through APIs and track ranking shifts, traffic trends, engagement drops, and click-through rate changes.

Statistics age fast. More than 70% of pages cited by AI were updated in the past twelve months, making a one-year refresh baseline the minimum standard for staying visible.

Key takeaway: Build systems where AI accelerates research and drafting while humans lock in E-E-A-T and originality.
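
The human-in-the-loop principle can be enforced in code by making publication impossible without a recorded approval. The following is a minimal sketch under that assumption; `RefreshProposal`, `review`, and `publish` are hypothetical names, and a real workflow would persist this state in a CMS or ticketing system.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    DRAFTED = "drafted"      # produced by an AI agent, not yet reviewed
    APPROVED = "approved"
    REJECTED = "rejected"
    PUBLISHED = "published"


@dataclass
class RefreshProposal:
    url: str
    summary: str
    status: Status = Status.DRAFTED
    reviewer: Optional[str] = None


def review(proposal: RefreshProposal, reviewer: str, approve: bool) -> RefreshProposal:
    """Record a human decision on an AI-drafted update."""
    proposal.reviewer = reviewer
    proposal.status = Status.APPROVED if approve else Status.REJECTED
    return proposal


def publish(proposal: RefreshProposal) -> RefreshProposal:
    """Publish only proposals that a human has approved."""
    if proposal.status is not Status.APPROVED:
        raise PermissionError(f"{proposal.url} has not been approved by a reviewer")
    proposal.status = Status.PUBLISHED
    return proposal
```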

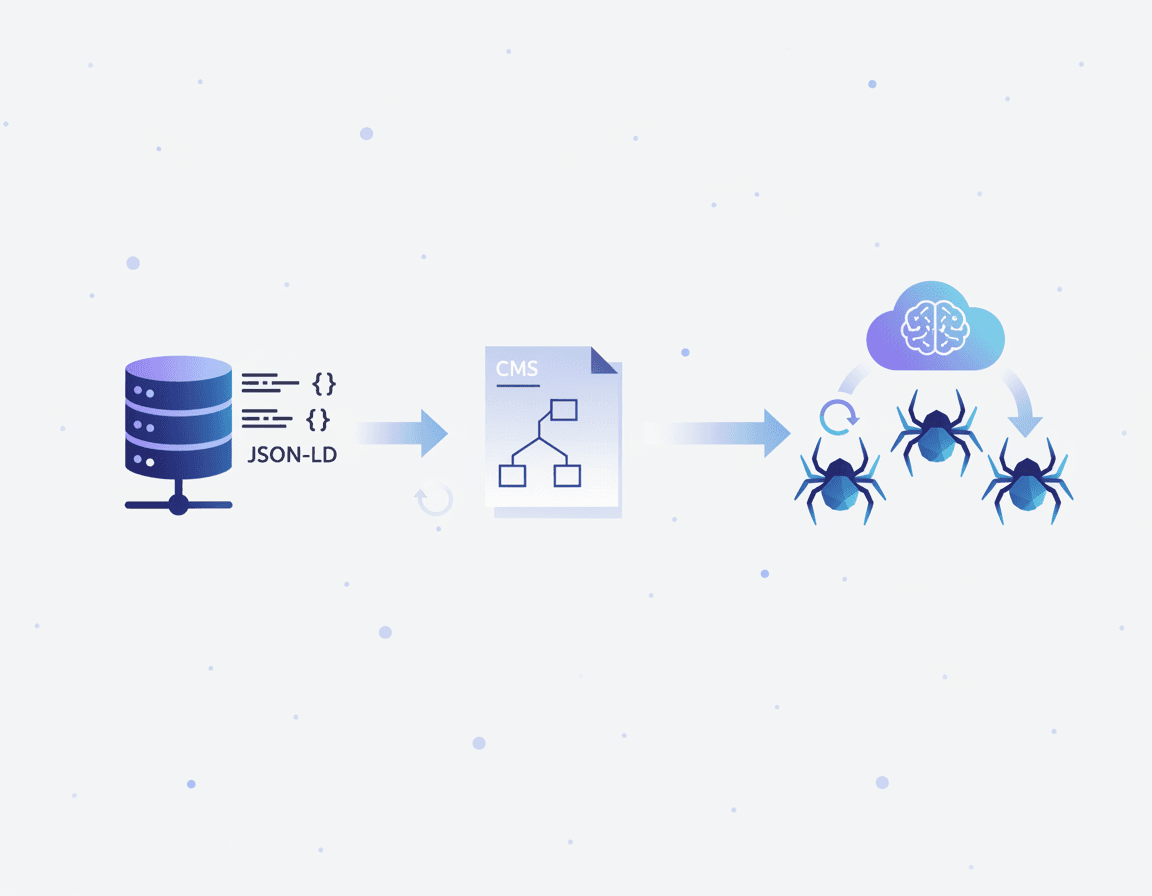

Technical Signals: Schema, JSON-LD & Recrawl Hooks

Machine-readable signals tell AI systems exactly when your content was updated and what it covers.

Schema Markup Essentials

Pages with valid schema markup are 2-4x more likely to appear in Google's AI Overviews and featured snippets. JSON-LD is the dominant format in 2026, and Google explicitly recommends it.

Google explicitly recommends using both datePublished (original) and dateModified (last substantive update) in your Schema.org markup. This machine-readable freshness signal helps AI systems verify recency.

Critical schema implementations:

Article schema with accurate datePublished and dateModified

FAQ schema that matches how users query AI assistants

HowTo schema for tutorial content

Product schema for e-commerce pages
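
As an illustration of the Article case, the sketch below builds a JSON-LD object with both date fields and wraps it in a script tag. The property names come from Schema.org; the helper names `article_jsonld` and `to_script_tag`, and all values passed to them, are placeholders for this example.

```python
import json
from datetime import date


def article_jsonld(headline: str, url: str, published: date,
                   modified: date, author: str) -> dict:
    """Build Schema.org Article markup with both freshness fields."""
    if modified < published:
        raise ValueError("dateModified cannot be earlier than datePublished")
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "datePublished": published.isoformat(),  # original publication date
        "dateModified": modified.isoformat(),    # last substantive update
        "author": {"@type": "Person", "name": author},
    }


def to_script_tag(data: dict) -> str:
    """Serialize the markup for embedding in a page's HTML."""
    # Escape "</" so a string value cannot close the script element early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'
```

Only update the dateModified argument when the page body has genuinely changed, in line with the date-manipulation warning earlier in this guide.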

JSON-LD Endpoints

A dedicated JSON-LD endpoint, such as /facts.jsonld or /schema, serves a machine-readable document free of CSS cruft and marketing scripts. LLMs and Google alike reward instant ingestion of clean, structured data.
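
A dedicated endpoint of this kind can be sketched with nothing but the Python standard library. The example below is an assumption-laden illustration: the `FACTS` payload is placeholder data, and a production site would more likely add a route to its existing web framework than run a separate WSGI app.

```python
import json
from wsgiref.simple_server import make_server

# Placeholder facts; replace with data generated from your source of truth.
FACTS = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Co",
    "url": "https://example.com",
}


def app(environ, start_response):
    """WSGI app that serves clean JSON-LD at /facts.jsonld and 404s elsewhere."""
    if environ.get("PATH_INFO") == "/facts.jsonld":
        body = json.dumps(FACTS).encode("utf-8")
        headers = [("Content-Type", "application/ld+json"),
                   ("Content-Length", str(len(body)))]
        start_response("200 OK", headers)
        return [body]
    body = b"Not found"
    start_response("404 Not Found", [("Content-Type", "text/plain"),
                                     ("Content-Length", str(len(body)))])
    return [body]


def serve(port: int = 8000) -> None:
    """Run a local development server; blocks until interrupted."""
    with make_server("", port, app) as server:
        server.serve_forever()
```

Calling `serve()` starts a local server, after which requesting /facts.jsonld on that port returns the JSON document with the application/ld+json content type.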

Sitemap-Based Refresh

Sitemaps trigger systematic recrawls. Google Cloud's documentation on sitemap-based index refresh describes the following behavior:

Daily refresh of any added, deleted, and updated URLs to the sitemap

Periodic refresh of unchanged URLs every 14 days

A single sitemap can contain a maximum of 50,000 URLs

Continuous updating also creates more frequent "recrawl hooks" for engines, reinforcing that your content is the best place to source fresh facts, entities, and examples.
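
To make sitemap-based refresh work, each URL entry needs an accurate lastmod value, and large libraries need to be split across files. The sketch below generates sitemap XML in the standard sitemaps.org format and splits at the 50,000-URL limit mentioned above; the function name `build_sitemaps` and the input shape are assumptions for this example.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
MAX_URLS_PER_SITEMAP = 50_000


def build_sitemaps(pages: list[tuple[str, date]]) -> list[bytes]:
    """Turn (url, last_modified) pairs into one or more sitemap documents.

    Each returned document holds at most 50,000 URLs and is UTF-8 encoded XML.
    """
    documents = []
    for start in range(0, len(pages), MAX_URLS_PER_SITEMAP):
        urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
        for loc, last_modified in pages[start:start + MAX_URLS_PER_SITEMAP]:
            url = ET.SubElement(urlset, "url")
            ET.SubElement(url, "loc").text = loc
            # lastmod should reflect the last substantive content change.
            ET.SubElement(url, "lastmod").text = last_modified.isoformat()
        documents.append(
            ET.tostring(urlset, encoding="utf-8", xml_declaration=True)
        )
    return documents
```

When more than one document is produced, the files would normally be listed in a sitemap index file so crawlers can find all of them.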

What's the Operational Playbook for 1,000+-Page Libraries?

Once a site crosses 1,000 indexed URLs, "just publish more" stops working. You need a structured framework.

Tiered Maintenance Calendar

| Tier | Review Frequency | Content Type | Owner |
|---|---|---|---|
| Tier 1 | Monthly | Product pages, pricing, top-of-funnel assets | Content lead |
| Tier 2 | Quarterly | Category pages, comparison content | SEO team |
| Tier 3 | Bi-annually | Evergreen guides, foundational content | Subject matter experts |
| Tier 4 | Annually | Archive content, low-traffic pages | Automated scan |

A maintenance calendar brings discipline by spelling out how often different page tiers must be reviewed and who is on the hook for each tier.
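
The calendar can be turned into a simple due-date check. This sketch assumes the four tiers from the table, reads "bi-annually" as every six months, and approximates each frequency in days; the names `REVIEW_INTERVAL_DAYS`, `next_review`, and `overdue` are hypothetical.

```python
from datetime import date, timedelta

# Approximate review intervals per tier, in days (assumed values).
REVIEW_INTERVAL_DAYS = {
    1: 30,    # monthly
    2: 91,    # quarterly
    3: 182,   # bi-annually, read here as every six months
    4: 365,   # annually
}


def next_review(tier: int, last_reviewed: date) -> date:
    """Return the date on which a page in the given tier is next due."""
    return last_reviewed + timedelta(days=REVIEW_INTERVAL_DAYS[tier])


def overdue(pages: list[tuple[str, int, date]], today: date) -> list[str]:
    """Return URLs whose next review date is today or earlier.

    Each page is a (url, tier, last_reviewed) tuple.
    """
    return [url for url, tier, last in pages if next_review(tier, last) <= today]
```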

Essential Audit Tooling

Content audit tools gather your site's pages and performance data into one view so you can see what to keep, update, merge, or remove. Look for tools that offer:

Fast crawling with rich metrics

AI citation tracking capabilities

Integration with existing analytics platforms

Collaboration features for distributed teams

As with the refresh loop itself, auditing works best as a repeatable process that runs continuously. This operational discipline separates teams that maintain visibility from those that lose ground.

Why Do External Trust & Citation Signals Like E-E-A-T Boost AI Visibility?

Trust signals are elements on a website that help build credibility and trust with visitors. Examples include customer testimonials, security badges, and professional design. For AI systems, these signals determine citation confidence.

E-E-A-T for AI Citations

Incorporating human elements, such as author bios and personal stories, can enhance trust in AI-generated content. AI-generated content can sometimes lack the human touch that builds trust with readers, so deliberate E-E-A-T signals matter.

Third-Party Corroboration

Reddit is now the most cited domain by AI platforms at approximately 40%. This matters because AI systems weight content that appears across multiple authoritative sources.

Content with tables and lists gets cited 2.5x more than wall-of-text prose. The Princeton study found that citations, statistics, and quotes boost visibility by 30-40%.

Formatting for AI Extraction

AI-readable writing style prioritizes clear formatting patterns:

Answer-first structures (BLUF)

Short paragraphs (2-3 sentences max)

Question-based headers

Structured lists and numbered steps

Comparison tables

Evidence blocks with citations

76.4% of ChatGPT's most-cited pages were updated within the last 30 days. Freshness plus structure equals visibility.

Measuring Success: From AI Visibility to Revenue Uplift

Tracking the right metrics separates successful refresh programs from wasted effort.

Key Performance Indicators

| Metric | What It Measures | Target |
|---|---|---|
| AI Mention Rate | % of queries where your brand appears | Track weekly trends |
| Citation Rate | How often AI engines cite your content | Benchmark against competitors |
| Share of Voice | Visibility relative to competitors | Aim for category leadership |
| AI Referral Traffic | Sessions from AI platforms | Monitor growth rate |
| Revenue Attribution | Pipeline from AI-driven visitors | Connect to CRM |
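
The first three metrics in the table can be computed from a log of sampled AI answers. The definitions below are one reasonable reading of the table, not a standard: `AnswerSample` and the three functions are hypothetical names, and the sample data would come from whatever prompt-monitoring tool you use.

```python
from dataclasses import dataclass


@dataclass
class AnswerSample:
    query: str
    brands_mentioned: list[str]  # brand names found in the AI answer text
    cited_domains: list[str]     # domains linked as sources in the answer


def mention_rate(samples: list[AnswerSample], brand: str) -> float:
    """Share of sampled queries whose answer mentions the brand."""
    if not samples:
        return 0.0
    return sum(brand in s.brands_mentioned for s in samples) / len(samples)


def citation_rate(samples: list[AnswerSample], domain: str) -> float:
    """Share of sampled queries whose answer cites the domain as a source."""
    if not samples:
        return 0.0
    return sum(domain in s.cited_domains for s in samples) / len(samples)


def share_of_voice(samples: list[AnswerSample], brand: str,
                   competitors: list[str]) -> float:
    """Brand mentions divided by all mentions of the brand and its competitors."""
    tracked = [brand, *competitors]
    counts = {name: sum(name in s.brands_mentioned for s in samples)
              for name in tracked}
    total = sum(counts.values())
    return counts[brand] / total if total else 0.0
```

Running the same query set on a fixed schedule makes the weekly trend in these numbers comparable over time.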

The global AI SEO software market is projected to reach $4.97 billion by 2033, up from $1.99 billion in 2024. This growth reflects the strategic importance of AI visibility.

Real-World Benchmarks

Case studies demonstrate measurable impact:

One SaaS HR client saw a 300% increase in AI traffic after refreshing outdated content

Over 80% of AI-driven traffic went to pages updated within the last two years

An e-commerce retailer achieved a 20x increase in revenue from AI by treating AI as a primary discovery channel

Within four months, one brand's AI visibility rose roughly 8x, from about 6% at onboarding to consistently more than 50%

The average age of URLs cited by AI assistants is 1064 days, compared to 1432 days for URLs in organic SERPs, making AI citations 25.7% "fresher" than traditional search.
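
The 25.7% figure follows directly from the two averages: it is the gap between them expressed as a share of the organic SERP age. A short check, with `relative_freshness` as a hypothetical helper name:

```python
def relative_freshness(ai_age_days: float, serp_age_days: float) -> float:
    """How much younger AI-cited URLs are, as a fraction of the SERP average age."""
    return (serp_age_days - ai_age_days) / serp_age_days


# (1432 - 1064) / 1432 = 368 / 1432
print(f"{relative_freshness(1064, 1432):.1%}")  # prints 25.7%
```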

As noted earlier, Perplexity cites approximately 2.8x more sources per query than ChatGPT, so fresh, comprehensive content has more opportunities to appear there.

Key Takeaways & How Relixir Automates the Heavy Lifting

Content refresh in 2026 requires four interconnected practices:

Detect decay fast with automated monitors that catch ranking drops before quarterly audits

Prioritize pages using AI-driven scoring that blends traffic, intent alignment, and freshness gaps

Execute surgical rewrites that add updated data, schema markup, and entity depth while preserving URL equity

Track AI visibility metrics including citation share, answer position, and revenue lift to feed the next refresh cycle

For teams seeking to automate this process, Relixir offers a GEO-native CMS with autonomous refresh capability that continuously scans your entire content library for outdated information. When your product releases new features, updates pricing, or changes positioning, the platform automatically identifies all affected content and refreshes it to maintain accuracy.

The platform auto-syncs with your knowledge base, including product specs, documentation, release notes, and pricing pages. When that source changes, all dependent content updates automatically, eliminating the content debt that accumulates in traditional CMS platforms.

For B2B companies looking to dominate AI search visibility, building systems that detect, prioritize, execute, and monitor content freshness is no longer optional. It is the new standard for maintaining competitive advantage in 2026.

Frequently Asked Questions

Why is content refreshing important in 2026?

Content refreshing is crucial in 2026 due to the dominance of AI search engines like ChatGPT and Google AI Overviews, which prioritize fresh, accurate content for citations and visibility.

How do AI search engines evaluate content freshness?

AI search engines evaluate content freshness by checking the recency of updates, with platforms like Perplexity citing more recent sources. They also use schema markup to verify update dates.

What are common mistakes in content refreshing?

A common mistake is changing dates without substantive updates, which can lead to penalties. Effective refreshing involves updating content with new data and maintaining authority.

How does Relixir help with content refreshing?

Relixir automates content refreshing by continuously scanning for outdated information and syncing updates with your knowledge base, ensuring content remains accurate and visible in AI search.

What is the role of schema markup in content visibility?

Schema markup, especially JSON-LD, is crucial for content visibility as it helps AI systems understand update recency and content structure, increasing the likelihood of being cited in AI-generated responses.

Sources

https://www.qwairy.co/blog/content-freshness-ai-citations-guide

https://searchatlas.com/research/url-freshness-in-llm-generated-answers/

https://www.cognizo.ai/customers/hat-club-turned-ai-visibility-into-20x-revenue

https://www.airops.com/blog/ai-agents-content-monitoring-refresh

https://www.digitalapplied.com/blog/schema-markup-ai-generation-guide-2026

https://www.growthmarshal.io/field-notes/how-to-use-endpoints-to-drive-llm-citations

https://docs.cloud.google.com/generative-ai-app-builder/docs/index-refresh-sitemap

https://www.searchinfluence.com/blog/ai-seo-tracking-tools-2026-analysis-platforms/