How To Keep New Blog Content Fresh and Current: A Guide

Keeping blog content fresh requires systematic monitoring for content decay, substantive updates beyond date changes, and proper technical signals like schema markup. Research shows 68% of AI Overview citations come from content updated within the last 12 months, while AI systems automatically add the current year to 28.1% of sub-queries even when users don't specify it.

TLDR

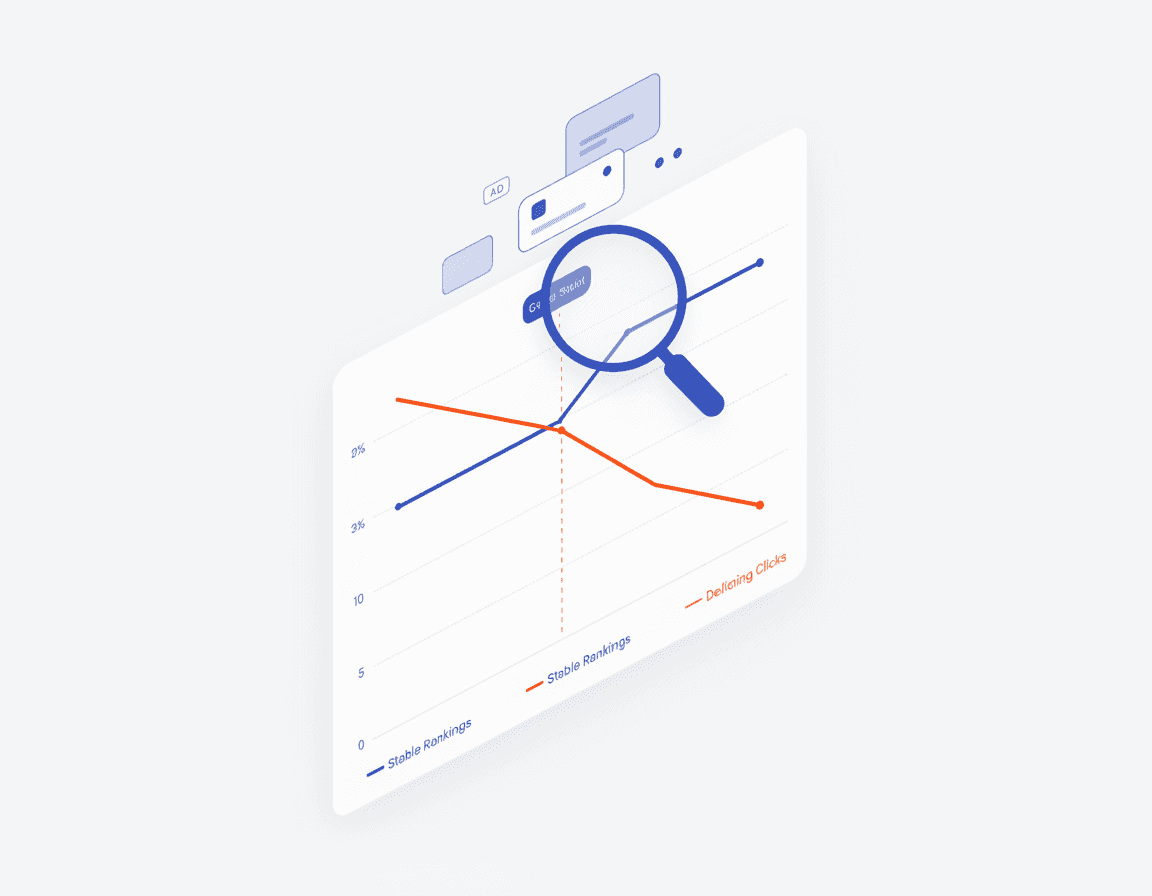

Content decay detection: Monitor declining clicks despite stable rankings and dropping CTR to identify pages needing updates before traffic disappears

Substantive refresh framework: Follow a 6-step process (identify, score, audit, update, re-signal, monitor) that produces 3-5x higher ROI than creating new content

Technical freshness signals: Implement both datePublished and dateModified in Schema.org markup to communicate updates to AI systems

Update vs republish decisions: Keep URLs stable for pages with existing authority; only republish when fundamental restructuring is needed

AI citation tracking: Monitor both traditional SEO metrics and AI-specific visibility to measure refresh impact across all discovery platforms

In the AI-search era, brands that keep blog content fresh dominate answer boxes and citation panels. With AI systems automatically adding the current year to 28.1% of sub-queries even when users don't specify it, freshness has become a decisive ranking factor. This guide shows exactly how to keep blog content fresh without burning out your team.

Why Must You Keep Blog Content Fresh in the AI-Search Era?

"Freshness is a built-in signal that boosts timely content for queries where recency matters," according to 201 Creative's analysis. But what exactly makes freshness so critical today?

AI-powered answers increase demand for current sources, meaning well-dated, updated pages get more visibility. When ChatGPT, Perplexity, or Google AI Overviews generate responses, they increasingly favor content that demonstrates recent, substantive updates.

The stakes are significant. Research shows that 67% of marketing and CX leaders say keeping content fresh and updated has become "significantly more important" for both SEO and generative-AI answer visibility.

There's also a trust dimension. AI overviews and answer boxes promise quick, confident summaries. If those summaries cite outdated information, trust collapses. Your brand reputation suffers when AI tools spread incorrect information from your old content.

How Can You Spot Content Decay Before Traffic Disappears?

Content decay is the gradual decline in organic traffic and search engine rankings for a specific page over time. But here's the challenge: traditional SEO dashboards may show stable rankings while your traffic quietly erodes.

A quarterly audit is a practical minimum, with monthly check-ins for your highest-impact pages like product, pricing, and key lead-gen assets.

Key signals to monitor:

Declining clicks despite stable rankings: This often indicates hidden content decay

Dropping CTR for top queries: Fresher competitor pages may be stealing attention

Reduced impressions: Search engines may be showing your content less frequently

Outdated information flags: 51% of pages that lost traffic had outdated information

You get a higher ROI by updating existing URLs than by publishing entirely new posts. The existing authority and indexing of the URL work in your favor.

Hidden Content Decay in Stable Rankings

Hidden content decay is what happens when your organic traffic quietly erodes, even though your rankings in traditional SEO dashboards appear stable. This phenomenon has become increasingly common as search results add more ads, rich features, and AI-generated summaries that siphon clicks away from classic blue links.

Consider this example: A high-intent article might hold a stable average position of 3.1 for its main query, but clicks drop 30-40% over six months while impressions and SERP layout change dramatically.

How do you detect this hidden decay? Google Search Console is your primary tool. Look for:

Meaningful drop in clicks and/or impressions

Flat or slightly improved average position

Declining CTR for one or more top queries
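The three checks above can be combined into a single test. The following is a minimal sketch, not a Search Console integration: the `PeriodStats` shape, the 20% click-drop threshold, and the 0.5-position tolerance are all assumptions chosen for illustration, and the sample numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PeriodStats:
    """Aggregated search metrics for one page over one period (assumed shape)."""
    clicks: int
    impressions: int
    avg_position: float

    @property
    def ctr(self) -> float:
        # CTR is clicks divided by impressions; guard against empty periods.
        return self.clicks / self.impressions if self.impressions else 0.0


def has_hidden_decay(
    previous: PeriodStats,
    current: PeriodStats,
    min_click_drop: float = 0.20,      # assumed threshold: 20% fewer clicks
    max_position_shift: float = 0.5,   # assumed tolerance for a "flat" position
) -> bool:
    """Return True when clicks and CTR fall while average position holds."""
    if previous.clicks == 0:
        return False
    click_drop = (previous.clicks - current.clicks) / previous.clicks
    # Lower position numbers are better, so a small or negative shift
    # means the ranking is flat or slightly improved.
    position_shift = current.avg_position - previous.avg_position
    return (
        click_drop >= min_click_drop
        and position_shift <= max_position_shift
        and current.ctr < previous.ctr
    )


# Hypothetical numbers: position holds near 3 while clicks fall by 35%.
before = PeriodStats(clicks=1000, impressions=20000, avg_position=3.1)
after = PeriodStats(clicks=650, impressions=19000, avg_position=3.0)
print(has_hidden_decay(before, after))  # True
```

Tune the thresholds to your own traffic volumes; low-traffic pages fluctuate enough that a fixed percentage can produce false alarms.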

A typical SaaS team ships 4-8 changes per week, and each change affects 2-5 articles. That means 16-40 articles per month may need updates. Without systematic monitoring, this creates substantial content debt.

What Framework Refreshes Content for AI Citations?

A six-step framework—identify, score, audit, update, re-signal, monitor—consistently produces 3-5x higher ROI than creating new content by using existing authority signals and backlinks. A structured framework changes the question from "What should we fix next?" to "Which updates will create the most business impact?"

"Refreshing old content is not just about updating the date; it's about making the content relevant to today's audience and search engines," notes BlogSEO.io.

Here's a practical refresh workflow:

Identify decay candidates: Use GA4 and GSC data to find pages with declining performance

Score and prioritize: Rank pages by business impact and refresh difficulty

Audit content: Check for outdated statistics, deprecated features, and stale examples

Add substantive updates: Content updated with 500+ new words of meaningful content saw 43% average traffic increases

Update technical signals: Refresh schema markup, internal links, and metadata

Monitor results: Track improvements within 30-60 days
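The "score and prioritize" step can be made concrete with a simple formula. This is one possible sketch, not a standard method: the 1-to-5 scales, the `Page` fields, and the scoring formula (impact times click loss, divided by difficulty) are assumptions for illustration, and the URLs are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Page:
    url: str
    business_impact: int     # assumed scale: 1 (low) to 5 (high)
    refresh_difficulty: int  # assumed scale: 1 (easy) to 5 (hard)
    click_drop: float        # fraction of clicks lost, e.g. 0.35 for 35%


def priority_score(page: Page) -> float:
    """Higher scores mean refresh sooner: high impact and decay, low effort."""
    return page.business_impact * page.click_drop / page.refresh_difficulty


def prioritize(pages: list[Page]) -> list[Page]:
    """Sort pages so the most valuable refresh candidates come first."""
    return sorted(pages, key=priority_score, reverse=True)


# Hypothetical decay candidates.
pages = [
    Page("/blog/pricing-guide", business_impact=5, refresh_difficulty=2, click_drop=0.30),
    Page("/blog/company-news", business_impact=1, refresh_difficulty=1, click_drop=0.50),
    Page("/blog/feature-tutorial", business_impact=4, refresh_difficulty=4, click_drop=0.40),
]

for page in prioritize(pages):
    print(f"{page.url}: {priority_score(page):.2f}")
```

With these inputs the pricing guide ranks first even though the news post lost a larger share of clicks, because business impact outweighs raw decay.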

Pages updated with strategic freshness tactics saw 43% higher AI Overview visibility. Meanwhile, 68% of AI Overview citations come from content updated within the last 12 months.

For teams managing large-scale content libraries, platforms like Relixir offer autonomous agents that scan for outdated information and maintain freshness automatically—cutting manual update time by up to 80% while ensuring continuous AI search visibility.

Safely Republishing vs Substantial Updates

Republishing content is the process of taking a piece of content that has already been published and publishing it again, either on the same platform or a different one. But when should you republish versus simply update?

When to update in place:

Core information remains relevant

Page has existing backlinks and authority

Minor to moderate changes needed

When to republish:

Content needs fundamental restructuring

Topic angle has shifted significantly

Targeting entirely new keywords

Critical warning: Do not fake recency. Inaccurate dates or trivial edits can erode trust and hurt performance. Updating old content can lead to a 111% increase in traffic, but only when the changes are substantive.

You should always update the content to ensure it's still accurate and relevant before republishing. Keep URLs stable and protect sections that earned links to preserve your existing authority.
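The update-versus-republish criteria in this section can be written as a small decision helper. This is a rough sketch under the assumption that each criterion is a yes/no judgment made by an editor; the field and function names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PageAssessment:
    """Editor's yes/no answers for one page (hypothetical field names)."""
    has_backlinks_or_authority: bool
    needs_fundamental_restructure: bool
    topic_angle_shifted: bool
    targets_new_keywords: bool


def recommend_action(a: PageAssessment) -> str:
    """Map the criteria listed in this section to a recommendation."""
    republish = (
        a.needs_fundamental_restructure
        or a.topic_angle_shifted
        or a.targets_new_keywords
    )
    if not republish:
        return "update in place"
    if a.has_backlinks_or_authority:
        # Preserve earned authority by keeping the URL stable.
        return "republish, keeping the existing URL"
    return "republish"


minor_edit = PageAssessment(True, False, False, False)
big_rewrite = PageAssessment(True, True, False, False)
print(recommend_action(minor_edit))   # update in place
print(recommend_action(big_rewrite))  # republish, keeping the existing URL
```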

Which Technical Signals Tell Search Engines Your Post Is Fresh?

Beyond content quality, technical signals play a crucial role in communicating freshness to both traditional search engines and AI systems.

Google explicitly recommends using both datePublished and dateModified in your Schema.org markup. This machine-readable data helps AI systems understand when your content was last substantially updated.
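As an illustration, the markup can be generated from a script so that both dates always use a consistent ISO 8601 format. The sketch below assumes the `BlogPosting` type fits your page; the headline and dates are placeholders, and a real page would usually carry more properties (author, image, and so on).

```python
import json
from datetime import datetime, timezone


def article_jsonld(headline: str, published: datetime, modified: datetime) -> dict:
    """Build a minimal Schema.org BlogPosting object with both date fields."""
    if modified < published:
        raise ValueError("dateModified cannot be earlier than datePublished")
    return {
        "@context": "https://schema.org",
        "@type": "BlogPosting",
        "headline": headline,
        # isoformat() on a timezone-aware datetime yields ISO 8601 with offset.
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }


# Placeholder dates for illustration only.
published = datetime(2024, 3, 12, 9, 0, tzinfo=timezone.utc)
modified = datetime(2025, 11, 4, 14, 30, tzinfo=timezone.utc)
markup = article_jsonld("How to Keep Blog Content Fresh", published, modified)

print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```

Only change `dateModified` when the content has substantively changed, and keep it in sync with the date shown on the page.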

Essential technical freshness signals:

| Signal | Purpose | Implementation |
|---|---|---|
| dateModified schema | Machine-readable update date | ISO 8601 format in JSON-LD |
| Visible byline dates | Human-readable freshness indicator | Show both publish and update dates |
| Sitemap lastmod | Crawl prioritization | Update when content changes |
| Internal linking | Authority distribution | Link from fresh content to updated pages |
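For the sitemap signal, `lastmod` can be written from the same source of truth as your schema dates. This is a minimal sketch using Python's standard library; the URLs and dates are hypothetical, and most CMS platforms generate the sitemap for you.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages: dict[str, date]) -> str:
    """Return sitemap XML with one <url> entry and <lastmod> per page."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, last_modified in pages.items():
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        # date.isoformat() gives YYYY-MM-DD, a format the sitemap protocol accepts.
        ET.SubElement(url, "lastmod").text = last_modified.isoformat()
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical pages and last substantive update dates.
sitemap_xml = build_sitemap({
    "https://example.com/blog/fresh-content": date(2025, 11, 4),
    "https://example.com/blog/schema-guide": date(2025, 6, 18),
})
print(sitemap_xml)
```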

Search engines like Google use byline dates as a signal to determine content freshness, which can impact search rankings. However, Google has historically said it ignores structured data that does not mark up visible content.

The solution? Ensure your schema dates align with visible dates on the page. Implementing schema markup can lead to a 30% increase in click-through rates when done correctly.

Internal linking remains one of the most powerful yet underutilized SEO strategies in 2026. When you update content, refresh internal links to and from that page to signal relevance across your site.

How Can AI Agents Automate Blog Freshness?

Manual content maintenance doesn't scale. A typical content library requires constant attention as products evolve, statistics age, and competitor content improves.

AI documentation agents can read your codebase, analyze your support tickets, and monitor your changelogs to keep your knowledge base up to date automatically. These aren't simple chatbots—they're agents that actively maintain your content.

Modern AI-powered content refresh tools offer several capabilities:

Decay detection: Automatically identify pages with declining performance

Content analysis: Flag outdated statistics, deprecated features, and stale references

Update suggestions: Generate refresh recommendations based on current data

Performance tracking: Monitor results across both traditional search and AI platforms

One such content refresh tool runs a 6-step workflow that compares 28-day windows, scores decay, clusters topics, and builds a 7-day plan. Inputs include GA4 URL traffic, GSC query performance, and your current page inventory.
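To make the window comparison concrete, here is a small sketch of how a decay score could be computed from daily click counts. It is not the implementation of any particular tool: the scoring formula (fractional click loss between consecutive 28-day windows) and the sample data are assumptions.

```python
from datetime import date, timedelta


def window_clicks(daily_clicks: dict[date, int], end: date, days: int = 28) -> int:
    """Sum clicks for the window of `days` days ending on `end`, inclusive."""
    start = end - timedelta(days=days - 1)
    return sum(c for d, c in daily_clicks.items() if start <= d <= end)


def decay_score(daily_clicks: dict[date, int], today: date, days: int = 28) -> float:
    """Fraction of clicks lost between the previous window and the latest one."""
    latest = window_clicks(daily_clicks, today, days)
    previous = window_clicks(daily_clicks, today - timedelta(days=days), days)
    if previous == 0:
        return 0.0
    # Clamp at zero so growing pages are not reported as negative decay.
    return max(0.0, (previous - latest) / previous)


today = date(2025, 11, 30)
# Hypothetical data: 100 clicks/day in the earlier window, 70/day in the latest.
daily = {today - timedelta(days=i): (70 if i < 28 else 100) for i in range(56)}
print(f"{decay_score(daily, today):.2f}")  # 0.30
```

Using 28-day windows keeps each window to exactly four weeks, so weekday and weekend patterns line up between the two periods.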

Successful refreshes show measurable improvements in traffic, rankings, and AI visibility within 30-60 days of implementation.

For enterprise-scale content operations, Relixir's autonomous refresh continuously syncs with your knowledge base—product specs, pricing pages, documentation—so when your source of truth changes, all dependent content updates automatically. This eliminates the content debt that accumulates when teams manually manage hundreds or thousands of pages.

Track AI Citations Before & After a Refresh

To see if a refresh helped in LLMs, track mentions and citations across AI platforms. Traditional SEO metrics alone won't tell the full story.

Key metrics to monitor:

AI mention rate: How often your brand appears in AI-generated responses

Citation frequency: How often AI systems cite your content as a source

Position in AI responses: Where you appear relative to competitors

AI referral traffic: Visitors arriving from AI search platforms
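The first two metrics can be computed from a sample of AI answers to a fixed set of prompts, collected before and after a refresh. The sketch below assumes you already have those answers in some form; the `AIResponse` record shape, the brand name, and the sample data are hypothetical.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class AIResponse:
    """One sampled AI answer to a tracked prompt (hypothetical record shape)."""
    prompt: str
    text: str
    cited_urls: list[str] = field(default_factory=list)


def mention_rate(responses: list[AIResponse], brand: str) -> float:
    """Share of responses whose text mentions the brand (case-insensitive)."""
    if not responses:
        return 0.0
    hits = sum(brand.lower() in r.text.lower() for r in responses)
    return hits / len(responses)


def cites_domain(url: str, domain: str) -> bool:
    """True if the URL's host is the domain or one of its subdomains."""
    host = urlparse(url).hostname or ""
    return host == domain or host.endswith("." + domain)


def citation_rate(responses: list[AIResponse], domain: str) -> float:
    """Share of responses that cite at least one URL on the domain."""
    if not responses:
        return 0.0
    hits = sum(any(cites_domain(u, domain) for u in r.cited_urls) for r in responses)
    return hits / len(responses)


# Hypothetical sample of four answers.
sample = [
    AIResponse("best crm", "Acme and others are popular.", ["https://www.acme.com/blog/crm"]),
    AIResponse("crm pricing", "Pricing varies by vendor.", ["https://other.example/pricing"]),
    AIResponse("crm setup", "acme offers a setup guide.", []),
    AIResponse("crm trends", "Several trends stand out.", []),
]
print(mention_rate(sample, "Acme"))       # 0.5
print(citation_rate(sample, "acme.com"))  # 0.25
```

Run the same prompt set before and after the refresh so the two rates are comparable.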

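Internal citations (drawn from the model's own knowledge) and external citations (drawn from retrieved sources) are complementary: internal citations serve as a fallback when retrieval fails or is disabled, while external citations help when the model's internal knowledge is incomplete or missing.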

Refreshing outdated content led to a 300% increase in AI traffic for one client and a 54% increase in GPT-User bot hits for another. Over 80% of AI-driven traffic went to pages updated within the last two years.

Key takeaway: Track both traditional SEO metrics and AI-specific visibility metrics to understand the full impact of your content refreshes.

Conclusion: Continuous Freshness Wins AI-Search

Keeping blog content fresh is no longer optional—it's essential for visibility in both traditional search and AI-powered discovery. The data is clear: AI systems favor recent, well-maintained content, and brands that establish systematic refresh processes will dominate their categories.

Key takeaways from this guide:

Freshness is a front-row ranking signal in an AI-first world

Hidden content decay can erode traffic even when rankings appear stable

Substantive updates (not just date changes) drive real results

Technical signals like schema markup communicate freshness to AI systems

AI-powered tools can automate decay detection and refresh prioritization

Use correct dates, structured data, sitemaps with lastmod, and rapid indexing tools to surface updates faster. Build a systematic framework rather than relying on ad-hoc updates.

For teams looking to automate content freshness at scale, Relixir offers autonomous content refresh capabilities that continuously scan your entire content library for outdated information, automatically syncing with your knowledge base to maintain accuracy and AI search visibility.

Frequently Asked Questions

Why is content freshness important in the AI-search era?

Content freshness is crucial because AI-powered search engines prioritize recent and updated content for generating accurate and relevant responses. Fresh content helps maintain visibility in AI-generated answer boxes and citation panels.

How can you detect content decay before it affects traffic?

Content decay can be detected by monitoring key signals such as declining clicks despite stable rankings, dropping CTR for top queries, and reduced impressions. Regular audits using tools like Google Search Console can help identify these issues early.

What is the framework for refreshing content for AI citations?

The framework involves identifying decay candidates, scoring and prioritizing them, auditing content, adding substantive updates, updating technical signals, and monitoring results. This structured approach ensures higher ROI and improved AI search visibility.

When should you republish content versus updating it?

Republish content when it requires fundamental restructuring, a significant shift in topic angle, or targets new keywords. Update in place if the core information remains relevant and only minor to moderate changes are needed.

How does Relixir help in maintaining content freshness?

Relixir offers autonomous agents that scan for outdated information and maintain content freshness automatically, reducing manual update time by up to 80% and ensuring continuous AI search visibility.

Sources

https://www.qwairy.co/blog/content-freshness-ai-citations-guide

https://201creative.com/why-freshness-matters-ai-search-ranking-algorithms/

https://ferndesk.com/blog/how-to-keep-knowledge-base-up-to-date-automatically

https://www.blogseo.io/blog/refresh-old-content-ai-era-practical-guide

https://seohq.github.io/schema-markup-advanced-implementation

https://topicalmap.ai/blog/auto/internal-linking-strategy-guide-2026