How To Keep Your CMS Content Up to Date: A Guide

Keeping CMS content up to date requires regular audits, automated refresh cycles, and structured data implementation. Modern approaches combine AI agents for content generation with human oversight for accuracy, while health scores track freshness signals including traffic, rankings, and AI citation status. Pages cited by AI assistants are 25.7% fresher than traditional search results, making content currency essential for visibility.

TLDR

AI search engines prioritize fresh content, with cited URLs averaging 1,064 days old versus 1,432 days for traditional organic results

Content health scores combining traffic, rankings, backlinks, and AI inclusion help prioritize which pages need updating first

Automated refresh workflows using AI agents can accelerate content updates while humans validate facts and add expertise

81% of AI-cited pages use schema markup, with JSON-LD being the recommended format for machine readability

Syncing external sources like knowledge bases and documentation ensures content stays consistent across all platforms

New analytics tools can track AI referral traffic, with ChatGPT accounting for over 80% of AI-driven visits to websites

Keeping CMS content up to date is no longer optional. As AI search engines like ChatGPT, Perplexity, and Google AI Overviews reshape how buyers discover products, stale pages get deprioritized or ignored entirely. Fresh, well-structured content now determines whether your brand appears in both traditional SERPs and AI-generated answers.

This guide walks you through the practical steps for maintaining content freshness, from auditing your library to automating refresh cycles and measuring AI traffic. Whether you manage a handful of blog posts or hundreds of thousands of pages, these workflows will help you stay visible where it matters most.

Why Up-to-Date Content Is the New SEO—and GEO—Baseline

The rules of search have changed. "AI Overviews and other generative features don't just list links; they assemble direct answers by reading, summarizing, and cross-checking multiple sources in real time," explains Single Grain.

This shift means content freshness directly impacts whether your pages get cited. AI systems now automatically add the current year into 28.1% of sub-queries even when users do not include it in their original prompt. The bias toward recent content is baked into how these systems retrieve information.

The data confirms the preference is real. URLs cited by AI assistants are 25.7% fresher on average than those appearing in traditional organic results. ChatGPT shows the strongest preference for fresh content, ordering its references from newest to oldest.

Generative Engine Optimization, or GEO, is about making your content easy for AI-driven features to find, trust, and quote. The fundamentals of good SEO still apply, but the shift is in how that work gets surfaced. Instead of competing for position one on a results page, you are competing for the AI pull-quote.

Key takeaway: Content that was strong six months ago may already be losing ground in AI search. Freshness is now a ranking signal you cannot ignore.

How to Audit Your Library: Content Health Scores & Gap Analysis

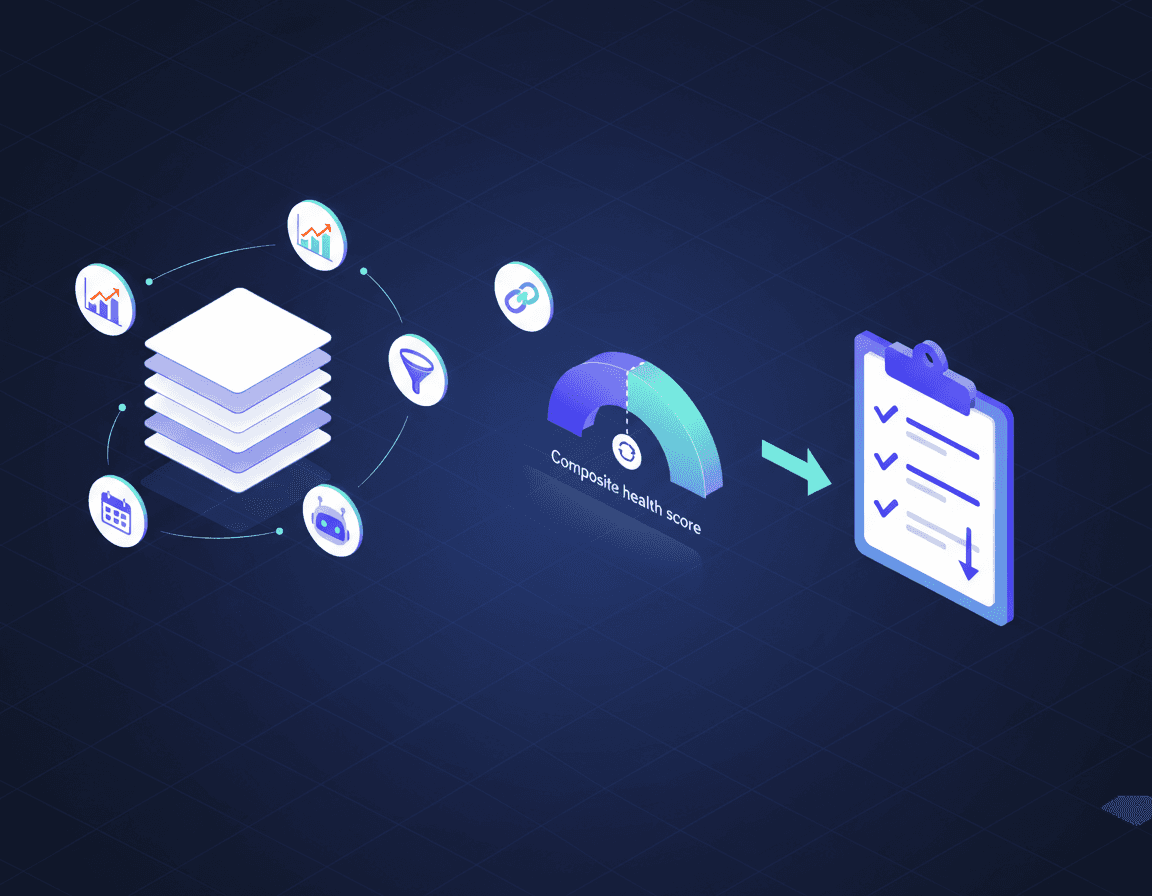

Before you can fix outdated content, you need to find it. A content health score aggregates multiple signals into a single, sortable metric that helps you prioritize updates.

According to Single Grain, an effective health score combines:

Traffic trajectory (rising or falling)

Ranking changes on target queries

Backlink profile

Conversion impact

Content age

Current AI Overview inclusion

The average age of URLs cited by AI assistants is 1,064 days, compared to 1,432 days for organic SERPs. AI assistants also prefer content that has been updated more recently, with an average time since last update of 909 days versus 1,047 days for organic results.
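
The headline percentage can be reproduced from these averages. A quick check, assuming the gap is measured relative to the organic figure (the function name is illustrative):

```python
def percent_fresher(ai_days: float, organic_days: float) -> float:
    """Relative age reduction of AI-cited URLs versus organic results, in percent."""
    return (organic_days - ai_days) / organic_days * 100


# Average URL age: 1,064 days (AI-cited) vs 1,432 days (organic SERPs)
age_gap = percent_fresher(1064, 1432)

# Average time since last update: 909 days (AI-cited) vs 1,047 days (organic)
update_gap = percent_fresher(909, 1047)

print(f"Age gap: {age_gap:.1f}%")        # Age gap: 25.7%
print(f"Update gap: {update_gap:.1f}%")  # Update gap: 13.2%
```

The first result matches the 25.7% figure quoted earlier; the second shows that the gap in time since last update is smaller, at roughly 13%.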

Google AI Overviews have a 70% chance of changing from one observation to the next, with content tending to change every 2.15 days on average. This volatility creates ongoing opportunity for pages that stay current.

Building Your Audit Workflow

| Step | Action | Tool Examples |
|---|---|---|
| 1 | Export all pages with last-modified dates | CMS export, Screaming Frog |
| 2 | Pull traffic and ranking data | Google Search Console, analytics platform |
| 3 | Check AI Overview inclusion | GEO analytics tool, manual spot checks |
| 4 | Calculate composite health score | Spreadsheet or dedicated platform |
| 5 | Prioritize pages below threshold | Sort by score, traffic potential |

Pages that score below your threshold feed into your refresh pipeline. Anything with high traffic but declining rankings or missing from AI results deserves immediate attention.
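
Steps 4 and 5 can be sketched in a few lines. This is a minimal illustration, not a standard formula: the weights, the 0-to-1 normalization of each signal, the three-year age cap, and the threshold of 60 are all assumptions you would tune to your own data.

```python
from dataclasses import dataclass


@dataclass
class PageSignals:
    url: str
    traffic_trend: float   # 0.0 (falling sharply) to 1.0 (rising), pre-normalized
    ranking_trend: float   # 0.0 to 1.0
    backlinks: float       # 0.0 to 1.0
    conversions: float     # 0.0 to 1.0
    age_days: int          # days since last substantive update
    in_ai_overview: bool


# Hypothetical weights; they sum to 1.0 so the score lands between 0 and 100.
WEIGHTS = {
    "traffic": 0.25, "ranking": 0.20, "backlinks": 0.15,
    "conversions": 0.15, "freshness": 0.15, "ai": 0.10,
}
MAX_AGE_DAYS = 1095  # assumption: anything older than ~3 years scores 0 on freshness


def health_score(p: PageSignals) -> float:
    """Weighted sum of the six signals, scaled to 0-100."""
    parts = {
        "traffic": p.traffic_trend,
        "ranking": p.ranking_trend,
        "backlinks": p.backlinks,
        "conversions": p.conversions,
        "freshness": max(0.0, 1.0 - p.age_days / MAX_AGE_DAYS),
        "ai": 1.0 if p.in_ai_overview else 0.0,
    }
    return round(100 * sum(WEIGHTS[k] * v for k, v in parts.items()), 1)


def refresh_queue(pages, threshold: float = 60.0):
    """Return (score, url) pairs below the threshold, lowest score first."""
    scored = [(health_score(p), p.url) for p in pages]
    return sorted(item for item in scored if item[0] < threshold)


pages = [
    PageSignals("/blog/old-guide", 0.2, 0.3, 0.5, 0.4, 900, False),
    PageSignals("/blog/new-guide", 0.9, 0.9, 0.9, 0.9, 30, True),
]
for score, url in refresh_queue(pages):
    print(f"{url}: {score}")
```

Sorting by score alone is the simplest option; in practice you might also weight the queue by traffic potential, as step 5 suggests.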

Automate Refresh Cycles with AI Agents: Workflow Guide

Manual content updates do not scale. When you have hundreds or thousands of pages, automation becomes essential.

AI agents can accelerate the refresh process significantly. The recommended approach is to "use AI to accelerate ideation and section rewrites, then apply SME judgment to validate facts, inject examples, and add unique insights that demonstrate experience," according to Single Grain.

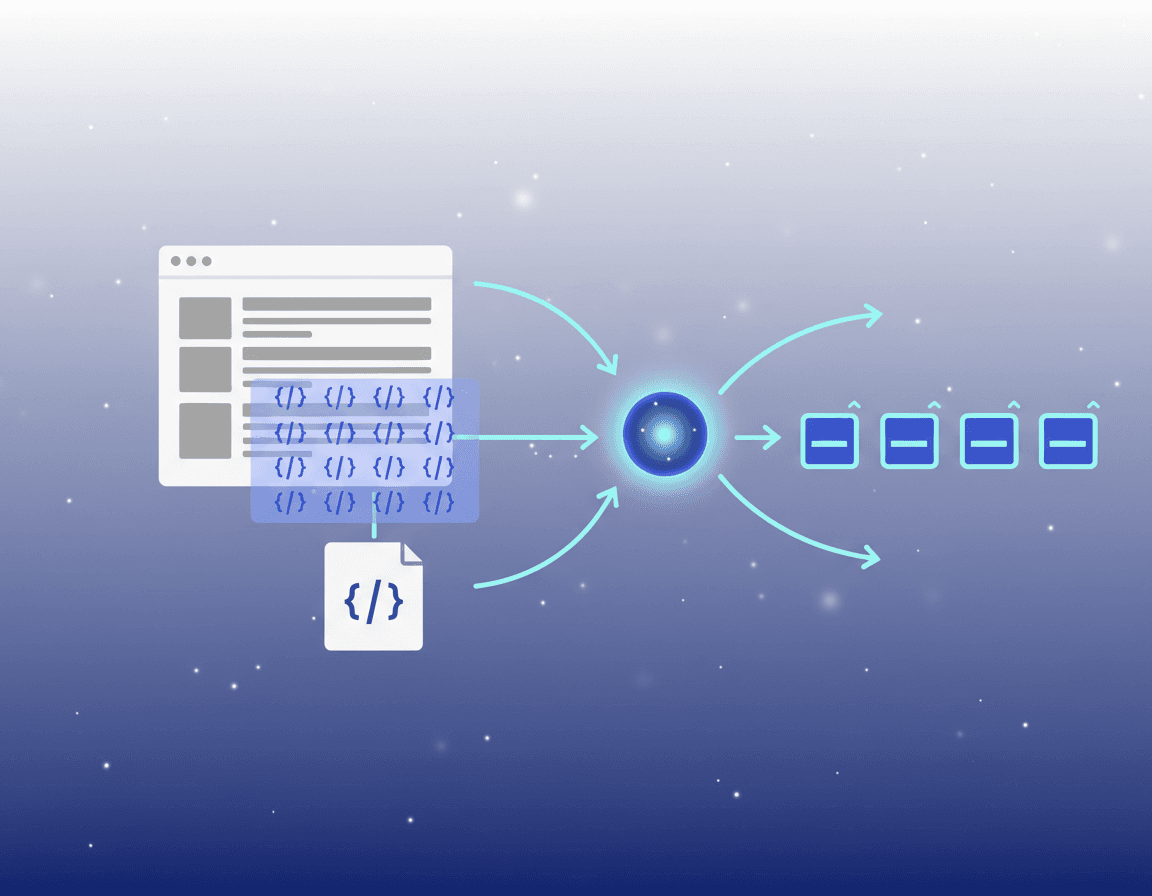

Modern CMS platforms are building this capability directly into their systems. Hygraph AI Agents are "intelligent automations that can be assigned to specific steps in your content workflows." These agents use large language models to understand context, make decisions, and execute content tasks autonomously while maintaining enterprise-grade governance.

Sample Refresh Workflow

Detection: Automated scan identifies pages below health score threshold

Triage: System categorizes refresh type (statistics update, full rewrite, structural changes)

Draft: AI generates updated sections based on current data and style guidelines

Review: Human editor validates facts and adds original insights

Publish: Updated page goes live with refreshed dateModified schema

Monitor: Track ranking and citation changes post-refresh
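
One way to model this workflow is as a small state machine that refuses to publish until a human has signed off. The sketch below is illustrative only: the `RefreshJob` class, the score cutoffs in `triage`, and the `approved_by` field are assumptions, not part of any CMS API.

```python
from dataclasses import dataclass
from typing import Optional

STAGES = ("detection", "triage", "draft", "review", "publish", "monitor")


@dataclass
class RefreshJob:
    url: str
    health_score: float
    stage: str = "detection"
    refresh_type: Optional[str] = None
    approved_by: Optional[str] = None  # set by a human editor during review


def triage(job: RefreshJob) -> str:
    """Choose a refresh type from the score; the cutoffs are illustrative."""
    if job.health_score < 30:
        return "full rewrite"
    if job.health_score < 50:
        return "structural changes"
    return "statistics update"


def advance(job: RefreshJob) -> RefreshJob:
    """Move a job to the next stage, blocking publish without human sign-off."""
    if job.stage == "monitor":
        return job  # terminal stage: nothing further to do
    if job.stage == "review" and job.approved_by is None:
        raise PermissionError(f"{job.url}: human review required before publish")
    next_stage = STAGES[STAGES.index(job.stage) + 1]
    if next_stage == "triage":
        job.refresh_type = triage(job)  # categorize on entering triage
    job.stage = next_stage
    return job
```

Making the review gate an exception rather than a silent no-op means an automated runner cannot skip the human step without the failure being visible.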

A global eCommerce company uses Hygraph's Translation Agent to automatically localize product descriptions into 12 languages as part of their approval workflow. Similar automation applies to content refresh at scale.

Keep a Human-in-the-Loop for E-E-A-T

Automation accelerates execution, but it cannot replace expertise. "AI accelerates research and drafting, but humans lock in E-E-A-T and originality," notes Single Grain.

AI-generated content should be fact-checked and edited by humans to ensure accuracy and quality. Google has stated that AI-generated content is not against its guidelines as long as it is useful and created for people first. The risk comes from publishing unverified information that damages trust.

Governance matters especially for:

Medical, legal, or financial content

Product specifications and pricing

Customer testimonials and case studies

Any claims that could be independently verified

Embed brand voice rules, SEO guardrails, and editorial checklists directly into the generation step to maintain consistency.
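
A checklist like this can run as a simple pre-publish check. The sketch below assumes a hypothetical set of sensitive topics and banned phrases; your own editorial rules would replace both.

```python
# Hypothetical rules; replace with your own governance and brand-voice lists.
SENSITIVE_TOPICS = {"medical", "legal", "financial", "pricing", "testimonial"}
BANNED_PHRASES = ("game-changing", "revolutionary")


def guardrail_issues(draft: str, topics: set, sources: list,
                     sme_signed_off: bool) -> list:
    """Return a list of problems that should block publication."""
    issues = []
    lowered = draft.lower()
    for phrase in BANNED_PHRASES:
        if phrase in lowered:
            issues.append(f"banned phrase: {phrase}")
    if not sources:
        issues.append("no sources attached for fact-checking")
    if topics & SENSITIVE_TOPICS and not sme_signed_off:
        issues.append("sensitive topic requires SME sign-off")
    return issues


problems = guardrail_issues(
    draft="Our revolutionary plan now costs less.",
    topics={"pricing"},
    sources=[],
    sme_signed_off=False,
)
for problem in problems:
    print(problem)
```

An empty list means the draft passed every automated check; it does not replace the human review itself.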

Add Machine-Readable Structure: Schema, JSON-LD & Fact Endpoints

Fresh content means nothing if AI cannot parse it. Structured data signals both freshness and authority to search engines and language models.

The numbers are compelling: 81% of AI-cited pages use schema markup. Pages with structured data are up to 40% more likely to appear in AI summaries and citation positions.

JSON-LD (JavaScript Object Notation for Linked Data) is Google's recommended format because it separates schema from HTML, making it easier to maintain. The most citation-worthy schema types include:

FAQPage: Highest citation probability among common types

Article: Essential for blog posts and guides

Organization: Establishes entity identity

HowTo: Matches instructional queries

Product: Critical for e-commerce

Google explicitly recommends using both datePublished (original) and dateModified (last substantive update) in your Schema.org markup. This signals freshness to AI models in a machine-readable, verifiable format.
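
As an illustration, the two date properties can be generated from your CMS metadata rather than typed by hand. This is a minimal sketch: the headline, URL, and dates are placeholders, and a production Article block would normally carry more properties (author, image, publisher).

```python
import json
from datetime import date


def article_jsonld(headline: str, url: str, published: date, modified: date) -> str:
    """Build a minimal schema.org Article block with both date properties."""
    if modified < published:
        raise ValueError("dateModified cannot be earlier than datePublished")
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "mainEntityOfPage": url,
        "datePublished": published.isoformat(),  # original publication date
        "dateModified": modified.isoformat(),    # last substantive update
    }
    return json.dumps(data, indent=2)


snippet = article_jsonld(
    "How To Keep Your CMS Content Up to Date",
    "https://example.com/blog/cms-content-freshness",  # placeholder URL
    date(2025, 1, 15),                                   # placeholder dates
    date(2025, 6, 2),
)
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```

The printed `<script>` element is what you would embed in the page's HTML.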

Dedicated Fact Endpoints

For maximum AI visibility, consider publishing a dedicated JSON-LD endpoint. As Growth Marshal explains, "A JSON-LD fact endpoint flips the script. It's a dedicated route—/facts.jsonld, /schema, or even versioned paths like /v1/ontology—that outputs a machine-readable document unpolluted by CSS cruft or marketing scripts."

The sameAs property connecting your organization schema to Wikipedia, Wikidata, and LinkedIn dramatically increases AI trust. You are essentially building your entity presence in AI knowledge systems.
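
A fact endpoint of this kind can be prototyped with nothing but the Python standard library. In the sketch below, the organization details and every `sameAs` URL are placeholders, and `serve` is a hypothetical helper; a real deployment would sit behind your existing web framework or CDN.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer

# Placeholder organization; every value here is illustrative.
ORG_FACTS = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Co",
    "url": "https://example.com",
    "sameAs": [
        "https://en.wikipedia.org/wiki/Example_Co",
        "https://www.wikidata.org/wiki/Q0000000",
        "https://www.linkedin.com/company/example-co",
    ],
}


def facts_body() -> bytes:
    """Serialize the fact document as UTF-8 JSON-LD."""
    return json.dumps(ORG_FACTS, indent=2).encode("utf-8")


class FactsHandler(BaseHTTPRequestHandler):
    def do_GET(self):
        # Serve only the dedicated fact route; everything else is a 404.
        if self.path != "/facts.jsonld":
            self.send_error(404)
            return
        body = facts_body()
        self.send_response(200)
        self.send_header("Content-Type", "application/ld+json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)


def serve(port: int = 8000) -> None:
    """Start a local server; blocks until interrupted."""
    HTTPServer(("127.0.0.1", port), FactsHandler).serve_forever()
```

Calling `serve()` and requesting `http://127.0.0.1:8000/facts.jsonld` returns the document with the `application/ld+json` media type and no HTML around it.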

Warning: Changing dateModified or publication dates without making substantive changes is considered date manipulation and is explicitly warned against by Google.
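
One way to avoid accidental date manipulation is to bump `dateModified` only when the text has changed by more than a trivial amount. The sketch below uses the standard library's `difflib`; the 5% threshold is an arbitrary assumption, and character-level similarity is only a rough proxy for what counts as substantive.

```python
import difflib
from datetime import date


def is_substantive(old_text: str, new_text: str, min_change: float = 0.05) -> bool:
    """True when at least `min_change` of the text differs (illustrative threshold)."""
    similarity = difflib.SequenceMatcher(None, old_text, new_text).ratio()
    return (1.0 - similarity) >= min_change


def next_date_modified(old_text: str, new_text: str,
                       current_modified: date, today: date) -> date:
    """Keep the existing dateModified unless the edit is substantive."""
    if is_substantive(old_text, new_text):
        return today
    return current_modified


old = "Refresh content every quater to keep it current."
typo_fix = "Refresh content every quarter to keep it current."
rewrite = old + " This year we added a new section on structured data and AI referral analytics."

last_modified = date(2025, 1, 15)
today = date(2025, 6, 2)
print(next_date_modified(old, typo_fix, last_modified, today))  # 2025-01-15
print(next_date_modified(old, rewrite, last_modified, today))   # 2025-06-02
```

A one-character typo fix leaves the date untouched, while the added section moves it forward.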

How to Sync External Sources & Headless Endpoints

Content often lives in multiple places: your CMS, help center, knowledge base, documentation site, and internal wikis. Keeping everything synchronized reduces maintenance burden and ensures AI crawlers always find your latest information.

External source connections reduce maintenance by syncing automatically. According to Conveyor's documentation, "Public sources refresh weekly, private sources refresh daily—no manual reuploads needed."

A Content Operating System approach unifies creation, governance, automation, and real-time delivery so latency is engineered out of the pipeline, not patched after the fact.

Sync Architecture Options

| Source Type | Refresh Frequency | Use Case |
|---|---|---|
| Public help center | Weekly | Customer-facing documentation |
| Confluence/Notion | Daily | Internal knowledge base |
| Google Drive | Daily | Policy documents, guides |
| Product database | Real-time | Pricing, specifications |
| CRM/Support tickets | On-demand | FAQ updates, common issues |

When your product releases new features, updates pricing, or changes positioning, all dependent content should update automatically. This eliminates the content debt that accumulates when source-of-truth changes are not propagated.
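
The refresh frequencies in the table translate naturally into a small scheduler. This is a sketch under assumptions: the source keys are made-up identifiers, "real-time" is modeled as always due, and "on-demand" sources sync only when explicitly requested.

```python
from datetime import datetime, timedelta

# Intervals mirroring the table above; None means "no fixed schedule".
REFRESH_INTERVALS = {
    "public_help_center": timedelta(weeks=1),
    "confluence_notion": timedelta(days=1),
    "google_drive": timedelta(days=1),
    "product_database": timedelta(0),  # real-time: always due
    "crm_support_tickets": None,       # on-demand: only when requested
}


def sources_due(last_synced: dict, now: datetime, requested=frozenset()) -> list:
    """Return the sources that should sync now, in table order."""
    due = []
    for source, interval in REFRESH_INTERVALS.items():
        if interval is None:
            if source in requested:
                due.append(source)
        elif now - last_synced.get(source, datetime.min) >= interval:
            # Sources that have never synced default to datetime.min, so they are due.
            due.append(source)
    return due


now = datetime(2025, 6, 10, 12, 0)
last_synced = {
    "public_help_center": datetime(2025, 6, 8, 0, 0),
    "confluence_notion": datetime(2025, 6, 9, 11, 0),
    "google_drive": datetime(2025, 6, 10, 9, 0),
    "product_database": datetime(2025, 6, 10, 11, 59),
}
print(sources_due(last_synced, now))
# ['confluence_notion', 'product_database']
```

In a real pipeline, a truly real-time source would more likely push changes through webhooks than be polled, so treat the zero interval as a stand-in.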

Platforms like Faqprime natively integrate with various knowledge base tools to sync and pull content into the CMS at a pre-configured frequency. The goal is making updates in one place and having them flow everywhere.

Measure What Matters: AI Traffic & Citation Analytics

You cannot improve what you do not measure. Traditional analytics tools were not built to track AI referral traffic, but new solutions are filling the gap.

Matomo Cloud now includes a new referrer channel type called AI Assistant that makes it easier to identify and segment traffic arriving from AI tools such as ChatGPT, Copilot, Gemini, Claude, and Perplexity.

For more precise detection, Loamly's Managed Proxy offers 100% accuracy by using RFC 9421 cryptographic signatures, the same standard used by OpenAI, Anthropic, and Google for their AI agents. The JavaScript tracker option provides 75-90% accuracy using behavioral and timing methods.

The stakes are high. ChatGPT is the top AI referrer, accounting for over 80% of AI traffic to websites. Understanding which pages receive AI citations and which do not reveals exactly where your refresh efforts should focus.
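
If your analytics tool does not yet segment this traffic, a rough first pass is to classify visits by referrer hostname. The hostname map below is an illustrative assumption, not the list used by Matomo or any other product, and referrer-based detection misses visits that arrive without a referrer header.

```python
from collections import Counter
from urllib.parse import urlparse

# Illustrative hostname map; extend it as assistants add or change domains.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "copilot.microsoft.com": "Copilot",
    "gemini.google.com": "Gemini",
    "claude.ai": "Claude",
    "perplexity.ai": "Perplexity",
}


def classify_referrer(referrer_url: str):
    """Return the assistant name for a referrer URL, or None if not recognized."""
    host = (urlparse(referrer_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)


def ai_traffic_share(referrers) -> dict:
    """Share of AI-referred visits per assistant, ignoring non-AI referrers."""
    counts = Counter(name for name in map(classify_referrer, referrers) if name)
    total = sum(counts.values())
    return {name: n / total for name, n in counts.items()} if total else {}


visits = [
    "https://chatgpt.com/",
    "https://chatgpt.com/",
    "https://chat.openai.com/",
    "https://chatgpt.com/",
    "https://claude.ai/",
    "https://www.google.com/search?q=cms",
]
print(ai_traffic_share(visits))  # {'ChatGPT': 0.8, 'Claude': 0.2}
```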

Key Metrics to Track

AI Mention Rate: Percentage of relevant queries where your brand appears

Citation Rate: How often AI engines cite your content as a source

Share of Voice: Your visibility relative to competitors for target keywords

AI Referral Traffic: Visits originating from AI platforms

Citation Freshness: Age of your cited content versus competitors

Combining AI traffic data with share-of-voice dashboards shows whether your refresh cadence is converting into actual citations and high-intent clicks.
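
The first three metrics can be computed from a log of AI answers to your target queries. The sketch below assumes you already collect, per query, which brands the answer names and which domains it cites; `QueryResult` is a hypothetical record type, and share of voice is defined here as your mentions divided by all brand mentions, which is one of several possible definitions.

```python
from dataclasses import dataclass


@dataclass
class QueryResult:
    query: str
    brands_mentioned: list  # brands named in the AI answer
    domains_cited: list     # domains the answer links to as sources


def ai_visibility(results, brand: str, domain: str) -> dict:
    """Mention rate, citation rate, and share of voice across tracked queries."""
    total = len(results)
    if total == 0:
        return {"mention_rate": 0.0, "citation_rate": 0.0, "share_of_voice": 0.0}
    mentions = sum(brand in r.brands_mentioned for r in results)
    citations = sum(domain in r.domains_cited for r in results)
    all_mentions = sum(len(r.brands_mentioned) for r in results)
    return {
        "mention_rate": mentions / total,
        "citation_rate": citations / total,
        "share_of_voice": mentions / all_mentions if all_mentions else 0.0,
    }


results = [
    QueryResult("best headless cms", ["Acme", "Rival"], ["acme.example"]),
    QueryResult("cms content refresh", ["Rival"], ["rival.example"]),
    QueryResult("geo tools", ["Acme", "Other"], []),
    QueryResult("schema markup guide", [], ["docs.example"]),
]
print(ai_visibility(results, brand="Acme", domain="acme.example"))
# {'mention_rate': 0.5, 'citation_rate': 0.25, 'share_of_voice': 0.4}
```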

Traditional vs. Agentic CMS: Which Platform Keeps Content Fresher?

Your choice of content management system directly affects how easily you can maintain freshness at scale.

Traditional CMS platforms were built for a different era. They require human effort for every piece of content, from ideation to writing to publishing. Modern content management systems mark a fundamental shift in how content operations work: content is delivered via API rather than tied to a single front end.

The market is moving rapidly away from traditional architectures. The headless CMS market is projected to grow from USD 3.94 billion in 2025 to USD 22.28 billion by 2034, with a CAGR of 21.42%.

Platform Comparison

| Capability | Traditional CMS | Headless CMS | Agentic CMS |
|---|---|---|---|
| Content publishing | Manual | Manual via API | Autonomous |
| Refresh cycles | Manual | Semi-automated | Fully automated |
| AI visibility monitoring | Third-party tools | Third-party tools | Built-in |
| Multi-channel delivery | Limited | Native | Native |
| Schema generation | Plugins | Custom code | Automatic |

Contentful pioneered headless CMS and remains the enterprise choice with robust features and reliability. Sanity provides a customizable studio that you can tailor to your exact needs. Strapi is free, open-source, and self-hosted.

The newest category, agentic CMS, goes further. These platforms use AI agents to autonomously handle content audits, localize campaigns, and refresh outdated pages without human handoffs. As Kontent.ai's VP of Marketing noted, "Traffic is down, AI answers are up, and GEO has moved to the boardroom."

For teams serious about content freshness, the platform choice matters. An agentic approach can reduce the time to audit and optimize content by up to 80% while improving organic and answer engine reach.

Key Takeaways & Next Steps

Maintaining fresh CMS content is now essential for visibility in both traditional search and AI-generated answers. Here is your action plan:

Audit your content library using a composite health score that weighs traffic, age, and AI citation status

Establish refresh cadences based on content type and freshness sensitivity

Implement automation using AI agents for drafting while keeping humans in the loop for fact-checking

Add structured data including datePublished and dateModified schema to every page

Sync external sources so updates propagate automatically across all platforms

Track AI traffic using dedicated analytics tools that identify chatbot referrers

Evaluate your CMS for native refresh and GEO capabilities

AI search engines increasingly prioritize domain-specific, well-structured content over third-party sources. Your blog is becoming the citation engine for AI search. Companies that establish AI search visibility today will have a significant competitive advantage as the shift from traditional search accelerates.

For teams looking to automate content freshness at scale, Relixir offers a GEO-native CMS with built-in AI agents that autonomously generate and refresh content optimized for LLM citations. The platform continuously scans content libraries for outdated information and auto-syncs with your knowledge base to maintain accuracy.

Frequently Asked Questions

Why is keeping CMS content up to date important for SEO and AI search?

Keeping CMS content up to date is crucial because AI search engines prioritize fresh, well-structured content. Stale content is often deprioritized or ignored, affecting your brand's visibility in both traditional search engine results and AI-generated answers.

What is a content health score and how is it used?

A content health score aggregates various metrics like traffic trajectory, ranking changes, and content age into a single metric. It helps prioritize which pages need updates to maintain visibility in AI search results.

How can AI agents help in automating content refresh cycles?

AI agents can automate the refresh process by identifying outdated content, generating updated sections, and executing content tasks autonomously. This reduces manual effort and ensures content remains current and relevant.

What role does structured data play in AI search visibility?

Structured data, such as JSON-LD schema, signals freshness and authority to AI search engines. Pages with structured data are more likely to appear in AI summaries and citations, enhancing visibility.

How does Relixir's GEO-native CMS support content freshness?

Relixir's GEO-native CMS uses AI agents to autonomously generate and refresh content, ensuring it remains optimized for LLM citations. The platform continuously scans for outdated information and syncs with your knowledge base to maintain accuracy.

Sources

https://ahrefs.com/blog/do-ai-assistants-prefer-to-cite-fresh-content/

https://www.genrank.co/blog/json-ld-schema-the-secret-language-ai-engines-understand

https://www.qwairy.co/blog/content-freshness-ai-citations-guide

https://storyblok.com/mp/how-to-optimize-for-generative-engine-optimization-with-a-headless-cms

https://www.growthmarshal.io/field-notes/how-to-use-endpoints-to-drive-llm-citations

https://headlesscms.guide/guides/reducing-content-api-latency

https://www.faqprime.com/help/335/sync-your-knowledge-base-content-automatically-with-faqprime

https://thedigitalbloom.com/learn/2025-ai-citation-llm-visibility-report/