Track AI Citations Across Multiple Domains with GEO-Native CMS

Tracking AI citations across multiple domains requires a GEO-native CMS that centralizes monitoring through unified dashboards, structured data endpoints, and automated refresh cycles. In reported case studies, one B2B SaaS company raised its AI citation rate from 8% to 24% within 90 days, and a consumer-electronics firm reclaimed first-page placement for 68% of target queries.

Key Takeaways

• Three core metrics define AI visibility: Share of AI Voice (brand mentions in answers), Citation Share (domain citations vs. total), and Recommendation Rate (appearance in "best tools" lists)

• Multi-domain challenges include fragmented data silos, inconsistent refresh cycles, and no centralized visibility across different CMS platforms

• Reddit mentions heavily influence citations, with sites having 10M+ Reddit mentions averaging 7 AI citations per 100 queries compared to 1.8 for minimal presence

• GEO-native CMS solutions auto-generate structured data endpoints like /facts.jsonld that LLMs can reliably access for citation

• Reported results include a 288% ROI from citation optimization in one case study, with qualified leads converting at 2.8x the rate

• Implementation requires JSON-LD endpoints, visitor deanonymization for revenue attribution, and alerts for brand safety monitoring across ChatGPT, Perplexity, Claude, and Gemini

The shift from click-based SEO to answer-based discovery has fundamentally changed how brands compete for visibility. AI citation tracking is now the playbook for winning recommendations from ChatGPT, Perplexity, Claude, and Google AI Overviews.

Instead of chasing rankings on traditional search results pages, marketing teams must monitor and optimize how AI systems mention, cite, and recommend their brands across every domain they operate. This guide walks through the metrics that matter, the challenges of multi-domain tracking, and how a GEO-native CMS centralizes citation management to drive measurable pipeline results.

Why Does AI Citation Tracking Matter More Than Traditional Rankings?

"AI didn't 'kill SEO.' It changed what visibility means -- and it changed what leadership should measure." That observation from the GEOL.AI visibility guide captures why AI citation tracking demands board-level attention.

In 2025, the center of gravity moved from click-driven discovery to answer-driven discovery. Users now ask AI assistants hyper-specific questions, and those assistants assemble responses by citing trusted sources. Seer Interactive reported that organic CTR dropped 61% and paid CTR dropped 68% for informational queries featuring Google AI Overviews.

The commercial implications are staggering. AI-referred sessions jumped 527% between January and May 2025, according to Frase.io research. ChatGPT now owns 84.2% of AI referrals, growing 3.26x year-over-year to establish itself as the default AI discovery interface.

Generative Engine Optimization is the discipline of designing and maintaining content so that large language models can accurately retrieve, summarize, and cite it. Brands that master this discipline capture high-intent buyers who convert at rates traditional SEO cannot match.

Key takeaway: Visibility has shifted from "rank" to "representation." You win when you are mentioned, cited, and recommended in the answer the user consumes -- not when you merely rank #1.

What Counts as an AI Citation? Core Metrics & Definitions

LLM "citations" are becoming a new kind of visibility: not a blue-link position you can track in a rank tool, but a source endorsement embedded inside the answer layer. Understanding the metrics that define this new landscape is essential for any team serious about AI search.

AI Visibility Monitoring (AIVM) has become a board-relevant capability. It is the operational discipline of tracking how AI systems represent your brand: what they say, whether they cite you, what sources they trust, and how often you are positioned as the recommended option.

The 2026 AI Visibility Benchmark Report analyzed 50,000+ AI queries across 15 industries. Key findings reveal the concentration of AI attention:

• Market leaders average 31% Share of Model across all platforms

• Top 3 brands capture 67% of all AI mentions in their category

• Perplexity cites the most sources, averaging 5.2 per response

Share of AI Voice, Citation Share & Recommendation Rate

Three KPIs form the foundation of AI visibility measurement:

| Metric | Formula | What It Predicts |
|---|---|---|
| Share of AI Voice (SoAIV) | (# answers that mention your brand) / (total answers in the query set) | Brand awareness in AI channels |
| Citation Share | (# citations to your domain) / (total citations across answers) | Content authority and trust |
| Recommendation Rate | (% of "best tools/vendors" answers where you appear in top N) | Pipeline generation potential |

These formulas come directly from AIVM methodology research and predict pipeline because they measure the moments when buyers are actively seeking solutions.
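The three formulas can be computed directly from a log of captured AI answers. The sketch below is one possible way to do it, not a reference implementation: the `Answer` record, its field names, and the substring-based brand match are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class Answer:
    """One captured AI answer for a prompt in the query set (hypothetical shape)."""
    text: str
    cited_urls: list = field(default_factory=list)
    # Ordered brand names for "best tools/vendors" prompts; empty otherwise.
    recommendations: list = field(default_factory=list)


def share_of_ai_voice(answers, brand):
    """Answers mentioning the brand (case-insensitive) over all answers."""
    if not answers:
        return 0.0
    hits = sum(1 for a in answers if brand.lower() in a.text.lower())
    return hits / len(answers)


def citation_share(answers, domain):
    """Citations to `domain` or its subdomains over all citations."""
    hosts = [urlparse(u).hostname or "" for a in answers for u in a.cited_urls]
    if not hosts:
        return 0.0
    ours = sum(1 for h in hosts if h == domain or h.endswith("." + domain))
    return ours / len(hosts)


def recommendation_rate(answers, brand, top_n=3):
    """Recommendation-style answers with the brand in the top N, over all such answers."""
    lists = [a.recommendations for a in answers if a.recommendations]
    if not lists:
        return 0.0
    hits = sum(1 for ranked in lists if brand in ranked[:top_n])
    return hits / len(lists)
```

Running the same fixed query set on a schedule keeps the denominators comparable from week to week, which is what makes the trend lines meaningful.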

Why Is Tracking Citations Across Many Domains So Hard?

AI answers are increasingly assembled from what the web says right now. If your pages are stale, incomplete, or inconsistent, modern search-and-answer systems can quote, summarize, and cite that outdated information at scale, turning a routine content maintenance issue into a visibility, trust, and revenue risk.

The challenges compound when brands operate across multiple domains:

• Fragmented data silos: Each domain may use different CMS platforms, making unified tracking nearly impossible

• Inconsistent refresh cycles: Some domains get updated weekly; others languish for months

• No centralized visibility: Marketing teams cannot see which domains are earning citations and which are being ignored

LLMs disproportionately cite what they can reliably access and re-access. A domain with broken links, outdated pricing, or deprecated feature descriptions will be deprioritized or ignored entirely.

Citation optimization is not a subset of SEO; it is a parallel discipline with different failure modes. Brands need infrastructure that treats content freshness and structured data as first-class concerns across every domain they control.

Domain Signals: Why Reddit Mentions Skew Citations

Perplexity's citation patterns skew heavily toward Reddit, with 46.7% of top sources coming from the platform. This creates a significant challenge for brands that lack community presence.

Reddit is now the most cited domain across AI platforms, with a citation rate of approximately 40%. Insights that brands gather from Reddit discussions and act on can therefore directly improve visibility in ChatGPT, Perplexity, and Google AI Overviews.

Sites with 10 million or more Reddit mentions average 7 AI citations per 100 relevant queries, compared to just 1.8 for sites with minimal Reddit presence. The relationship follows a logarithmic curve in which each order of magnitude in mention volume produces a roughly 1.3x to 1.5x improvement in citation rate.

This Reddit bias means brands must track not only their owned domain citations but also their presence in community discussions that AI models treat as trust signals.
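One way to keep owned and community citations in the same view is to bucket every cited URL by its host. The following is a minimal sketch; the domain sets are placeholders, and the three bucket names are an assumption rather than an established taxonomy.

```python
from collections import Counter
from urllib.parse import urlparse

OWNED_DOMAINS = {"example.com", "example.io"}   # hypothetical owned properties
COMMUNITY_DOMAINS = {"reddit.com"}              # community sources to watch


def _matches(host, domains):
    """True when host equals one of the domains or is a subdomain of it."""
    return any(host == d or host.endswith("." + d) for d in domains)


def classify_citation(url):
    """Label a cited URL as 'owned', 'community', or 'other'."""
    host = (urlparse(url).hostname or "").lower()
    if _matches(host, OWNED_DOMAINS):
        return "owned"
    if _matches(host, COMMUNITY_DOMAINS):
        return "community"
    return "other"


def citation_mix(cited_urls):
    """Return the share of citations in each bucket, or {} when there are none."""
    counts = Counter(classify_citation(u) for u in cited_urls)
    total = sum(counts.values())
    if not total:
        return {}
    return {bucket: counts[bucket] / total for bucket in ("owned", "community", "other")}
```

A falling "owned" share alongside a rising "community" share is a hint that the answer engines are sourcing the brand story from discussions rather than from the brand's own pages.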

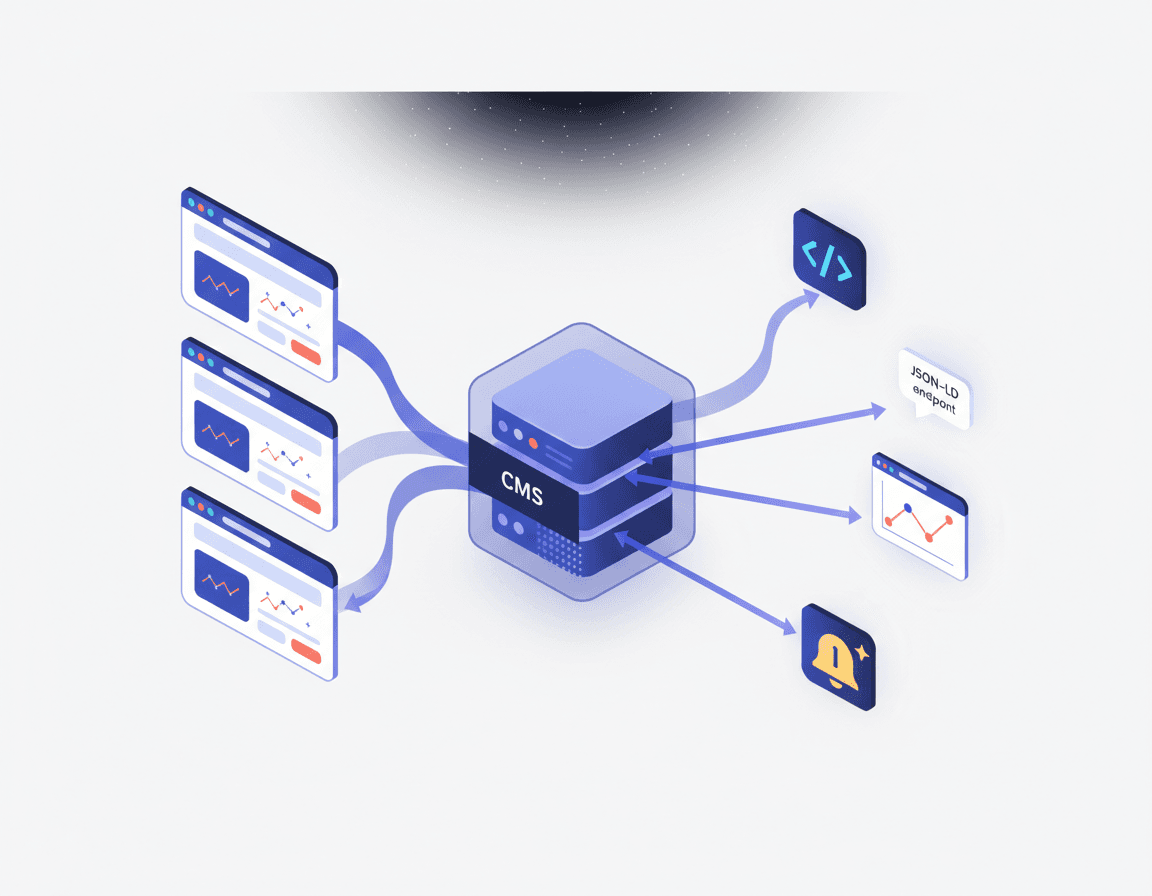

How Does a GEO-Native CMS Centralize Citation Tracking Across Domains?

A GEO-native CMS enables companies to create any content collection -- articles, case studies, guides, product comparisons -- and then generate and refresh unlimited items within those collections. This collection-based architecture gives LLMs a consistent, current body of content to cite when answering buyer questions.

Platforms like Relixir ship an agentic CMS that auto-generates structured data endpoints for every domain you connect. Rather than asking a model to extract facts from rendered pages, a JSON-LD fact endpoint hands them over directly. It is a dedicated route -- /facts.jsonld, /schema, or even versioned paths like /v1/ontology -- that outputs a machine-readable document unpolluted by CSS cruft or marketing scripts.
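To make the idea concrete, here is a minimal sketch of such an endpoint written as a plain WSGI application using only the Python standard library. It is not how Relixir or any other platform implements it; the `FACTS` content and the choice of the schema.org `Organization` type are illustrative assumptions.

```python
import json

# Hypothetical facts; in practice these would be pulled from the CMS.
FACTS = {
    "@context": "https://schema.org",
    "@type": "Organization",
    "name": "Example Co",
    "url": "https://example.com",
    "description": "Placeholder description maintained in the CMS.",
}


def render_facts(facts):
    """Serialize the facts as stable, UTF-8 encoded JSON-LD."""
    return json.dumps(facts, indent=2, sort_keys=True).encode("utf-8")


def app(environ, start_response):
    """Minimal WSGI app that serves only /facts.jsonld."""
    if environ.get("PATH_INFO") == "/facts.jsonld":
        body = render_facts(FACTS)
        start_response("200 OK", [
            ("Content-Type", "application/ld+json"),
            ("Content-Length", str(len(body))),
        ])
        return [body]
    start_response("404 Not Found", [("Content-Type", "text/plain")])
    return [b"not found"]
```

For local experiments the app can be hosted with `wsgiref.simple_server.make_server`; in production it would sit behind whatever server each domain already uses, with the same route published on every connected domain.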

GEO tools with visitor deanonymization can increase conversion rates by up to 20%. When combined with citation tracking, this creates a complete picture of which AI-referred visitors convert and which content drives them.

The best platforms provide full-suite analytics for AI search performance across every major platform: ChatGPT, Google AI Overviews, Perplexity, Claude, Gemini, and domain-specific LLMs.

Agentic Refresh & Schema Injection

Metadata Injection is an advanced GEO technique that moves beyond site-wide Schema.org markup. Instead, it embeds specific structured data (JSON-LD) directly associated with individual HTML content blocks using unique fragment identifiers.
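A rough sketch of what block-level injection could look like: each content block gets an `id`, and the accompanying JSON-LD uses the page URL plus that fragment as its `@id`. The helper name `render_block` and the use of the schema.org `WebPageElement` type are assumptions chosen for illustration.

```python
import json
from html import escape


def render_block(page_url, fragment_id, heading, text):
    """Return an HTML section plus a JSON-LD script tied to it by fragment id."""
    data = {
        "@context": "https://schema.org",
        "@type": "WebPageElement",
        "@id": f"{page_url}#{fragment_id}",
        "name": heading,
        "text": text,
    }
    # Escape "<" so the payload cannot close the script element early.
    payload = json.dumps(data, indent=2).replace("<", "\\u003c")
    return (
        f'<section id="{escape(fragment_id, quote=True)}">\n'
        f"  <h2>{escape(heading)}</h2>\n"
        f"  <p>{escape(text)}</p>\n"
        f"</section>\n"
        f'<script type="application/ld+json">\n{payload}\n</script>'
    )
```

Because the visible text and the structured data are generated from the same arguments, the two cannot drift apart when a block is refreshed.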

A GEO cadence ensures your most important answers remain the freshest, the clearest to summarize, and the most widely corroborated by third-party sources -- key signals for LLM retrieval and citation.

Clarity and extractability win: concise definitions, structured lists, and compact tables are repeatedly pulled into answers. The practical implication: each URL should contain scannable, passage-labeled segments designed for summarization, plus expandable depth for complex questions.

How Do You Implement Dashboards, Endpoints & Alerts?

Publishing a dedicated JSON-LD endpoint is the first step. A complementary step is the proposed llms.txt file, which is essentially a treasure map for language-model crawlers: a root-level Markdown document that curates the URLs you most want LLMs to read at inference time.
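Since llms.txt is just Markdown, it can be generated from the same content inventory that feeds the CMS. The sketch below follows the general shape of the llms.txt proposal (an H1 title, a blockquote summary, then H2 sections of annotated links); the function name and input structure are assumptions.

```python
def build_llms_txt(site_name, summary, sections):
    """Render an llms.txt body.

    `sections` maps a section title to a list of (name, url, note) tuples.
    """
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for title, links in sections.items():
        lines.append(f"## {title}")
        lines.append("")
        for name, url, note in links:
            lines.append(f"- [{name}]({url}): {note}")
        lines.append("")
    # End the file with exactly one trailing newline.
    return "\n".join(lines).rstrip() + "\n"
```

Generating one file per domain from a shared inventory keeps the curated URL lists consistent across every property instead of hand-editing each site.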

Implementation requires attention to provenance and attribution. Publishers should:

• Publish a concise public citation block (titles, URLs, short excerpt, author, date) that is rendered for users and indexed by crawlers

• Add JSON-LD linting into CI and verify ClaimReview and citation properties against schema.org examples

• Track citation fidelity, source-staleness rates, and reviewer override frequency
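The CI linting step from the list above can start very small. This sketch checks that a JSON-LD document parses, references schema.org, and carries a set of properties the team has decided to require. The `REQUIRED_BY_TYPE` table is an assumed house rule, not an official schema.org requirement list.

```python
import json

# Assumed house rules, not an official schema.org requirement list.
REQUIRED_BY_TYPE = {
    "ClaimReview": {"claimReviewed", "reviewRating", "author", "datePublished", "url"},
    "Article": {"headline", "author", "datePublished", "citation"},
}


def lint_jsonld(raw):
    """Return a list of human-readable problems; an empty list means it passed."""
    try:
        doc = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(doc, dict):
        return ["top-level value must be an object"]

    problems = []
    if "schema.org" not in str(doc.get("@context", "")):
        problems.append("@context should reference schema.org")

    doc_type = doc.get("@type")
    required = REQUIRED_BY_TYPE.get(doc_type) if isinstance(doc_type, str) else None
    if required is None:
        problems.append(f"unexpected @type: {doc_type!r}")
    else:
        for key in sorted(required - set(doc)):
            problems.append(f"missing property: {key}")
    return problems
```

In a pipeline, a wrapper script would run `lint_jsonld` over every published endpoint and exit non-zero when any list is non-empty, so a broken document fails the build instead of reaching crawlers.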

Visitor deanonymization is a process that allows businesses to identify anonymous website visitors by matching their IP addresses with a database of known users. This capability connects citation tracking to revenue attribution.
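The matching step described here can be sketched with Python's standard `ipaddress` module. The lookup table below is a hypothetical stand-in for the much larger datasets that commercial tools rely on, and the names in it are invented.

```python
import ipaddress

# Hypothetical lookup table mapping network ranges to known accounts.
KNOWN_NETWORKS = {
    "203.0.113.0/24": "Example Corp",
    "198.51.100.0/25": "Sample Industries",
}
_NETWORKS = [(ipaddress.ip_network(cidr), name) for cidr, name in KNOWN_NETWORKS.items()]


def identify_visitor(ip):
    """Return the account for the most specific matching network, or None."""
    try:
        addr = ipaddress.ip_address(ip)
    except ValueError:
        return None  # not a parseable IP address
    matches = [(net.prefixlen, name) for net, name in _NETWORKS if addr in net]
    # The longest prefix is the narrowest, most specific range.
    return max(matches)[1] if matches else None
```

Joining the returned account name with the session's referrer (for example, a visit arriving from an AI assistant) is what links a citation to a named opportunity in the pipeline.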

Alerting for Brand Safety & Revenue Opportunities

"Monitoring is not just about visibility; it's about brand safety." That warning from GEOL.AI research underscores why teams need proactive alerts.

Week-over-week variance appears in:

• Whether a brand appears in "best tools" shortlists

• Which sources are cited

• The ordering of recommendations

Treat AIVM as an early-warning system. Set alerts for "high-severity inaccuracies" the same way you would for uptime incidents.
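A simple way to start is to diff this week's observation against last week's for each tracked prompt and attach a severity to each change. The sketch below assumes a hypothetical `Snapshot` record and a three-level severity scheme; both are illustrative choices, not a standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Snapshot:
    """Weekly observation for one prompt on one AI platform (hypothetical shape)."""
    in_shortlist: bool
    rank: Optional[int]          # position in the recommendation list, if any
    cited_sources: frozenset
    inaccurate: bool = False     # set by a reviewer or an automated fact check


def diff_snapshots(prompt, last_week, this_week):
    """Return (severity, message) alerts for week-over-week changes."""
    alerts = []
    if this_week.inaccurate:
        alerts.append(("high", f"{prompt}: answer contains a flagged inaccuracy"))
    if last_week.in_shortlist and not this_week.in_shortlist:
        alerts.append(("high", f"{prompt}: dropped out of the shortlist"))
    elif (last_week.rank is not None and this_week.rank is not None
          and this_week.rank > last_week.rank):
        alerts.append(("medium", f"{prompt}: rank fell {last_week.rank} -> {this_week.rank}"))
    lost = last_week.cited_sources - this_week.cited_sources
    if lost:
        alerts.append(("low", f"{prompt}: sources no longer cited: {sorted(lost)}"))
    return alerts
```

Routing only the "high" alerts to the on-call channel mirrors how uptime incidents are handled, while the lower severities can feed a weekly review.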

What Results Can You Expect? Benchmarks & Case Studies

A B2B SaaS company worked with a GEO agency to increase its AI citation rate from 8% to 24% in 90 days, generating 47 qualified leads at 2.8x conversion. The reported result: over €180K in projected pipeline value from a €16,485 investment, with a reported ROI of 288%.

Within two quarters of implementing a GEO refresh program, one consumer-electronics firm reclaimed first-page placement for 68% of target queries, reduced bounce rate by 28%, and cut support-driven call volume 14%, modeling a 3.1× content-ROI uplift.

The primary success metric for AI optimization is the AI inclusion rate -- the percent of controlled prompts where your page is cited or linked. After optimization, conversion rates from AI referrals improved from 1.2% to 2.0%, indicating higher-intent traffic.
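As a small worked example, the inclusion rate and the relative uplift between two rates can be computed as follows. The function names are illustrative, and the input is assumed to be one boolean per controlled prompt indicating whether the page was cited or linked.

```python
def inclusion_rate(results):
    """Percent of controlled prompts where the page was cited or linked."""
    if not results:
        return 0.0
    return 100.0 * sum(1 for cited in results if cited) / len(results)


def relative_uplift(before, after):
    """Multiplicative change between two rates expressed in the same unit."""
    return after / before
```

Applied to the figures quoted in this section, a move from 8% to 24% is a 3x uplift, and a move from 1.2% to 2.0% is roughly a 1.67x uplift.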

Best Practices & Common Pitfalls When Scaling to Many Domains

Keeping content fresh and updated ensures it remains relevant and cited by AI systems. Teams scaling across many domains should follow this checklist:

Do:

• Implement centralized content refresh schedules synced to your knowledge base

• Publish structured data endpoints on every domain

• Monitor citations and mentions -- where your brand appears in AI responses

• Track Reddit presence since it accounts for 46.7% of Perplexity's top cited sources

Avoid:

• Siloed CMS platforms that prevent unified visibility

• Manual refresh processes that cannot scale

• Ignoring community signals that AI models treat as trust indicators

• Assuming Google rankings translate to AI citations (47% of queries show different brand rankings)

The AI-augmented market continues to evolve rapidly, fueled by innovations in AI -- particularly generative AI. Teams must treat citation tracking as an operational discipline, not a one-time audit.
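A centralized refresh schedule needs, at minimum, a cross-domain view of which pages are overdue. The sketch below groups stale URLs by domain; the 90-day threshold and the `(domain, url, last_updated)` input shape are assumptions to adjust per content type.

```python
from datetime import date, timedelta


def stale_pages(pages, today, max_age_days=90):
    """Group URLs by domain when their last update is older than max_age_days.

    `pages` is an iterable of (domain, url, last_updated) tuples,
    where last_updated is a datetime.date.
    """
    cutoff = today - timedelta(days=max_age_days)
    report = {}
    for domain, url, last_updated in pages:
        if last_updated < cutoff:
            report.setdefault(domain, []).append(url)
    return report
```

Passing `today` explicitly rather than reading the clock inside the function keeps the audit reproducible, which helps when the same report is compared across weeks.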

Key Takeaways: Own Your AI Share of Voice Across Every Domain

To track AI citations across multiple domains effectively:

• Deploy AI visibility monitoring across ChatGPT, Perplexity, Claude, and Gemini

• Surface the right KPIs: Share of AI Voice, Citation Share, and Recommendation Rate

• Publish JSON-LD or llms.txt endpoints on each site

• Roll logs into a GEO-native CMS that auto-refreshes stale pages

• Connect citation data to revenue through visitor deanonymization

Relixir provides this complete infrastructure through its GEO-native CMS, enabling companies to generate, refresh, and track content optimized for LLM citations across unlimited domains. With built-in AI visibility monitoring, autonomous content refresh, and visitor identification, teams can move from measuring the problem to solving it within a single platform.

The window to dominate AI-search-driven revenue is open now. Brands that establish citation tracking infrastructure today will hold significant competitive advantages as buyer behavior continues shifting toward AI-powered discovery.

Frequently Asked Questions

What is AI citation tracking and why is it important?

AI citation tracking involves monitoring how AI systems mention, cite, and recommend brands across various domains. It's crucial because AI-driven discovery has shifted focus from traditional rankings to being cited in AI-generated answers, impacting brand visibility and conversion rates.

How does a GEO-native CMS help with AI citation tracking?

A GEO-native CMS centralizes citation management by enabling the creation and refresh of content collections across multiple domains. It provides structured data endpoints and analytics for AI search performance, ensuring content is optimized for LLM citations.

What are the key metrics for measuring AI visibility?

Key metrics include Share of AI Voice, Citation Share, and Recommendation Rate. These metrics help assess brand awareness, content authority, and pipeline generation potential in AI channels.

Why is tracking citations across multiple domains challenging?

Tracking citations is difficult due to fragmented data silos, inconsistent content refresh cycles, and lack of centralized visibility. These challenges can lead to outdated information being cited, affecting brand trust and visibility.

How does Relixir's GEO-native CMS enhance AI search visibility?

Relixir's CMS offers autonomous content refresh, AI visibility monitoring, and visitor identification, enabling brands to generate and track content optimized for LLM citations across multiple domains, thus improving AI search visibility and conversion rates.

Sources

https://www.singlegrain.com/geo/content-refresh-cycles-for-ai-driven-content/

https://howtogetmentionedbyai.com/blog/reddit-mentions-ai-search/

https://www.frase.io/blog/what-is-generative-engine-optimization-geo

https://www.knewsearch.com/blog/ai-visibility-benchmark-report

https://auto-post.io/blog/refresh-content-to-secure-ai-answers

https://www.growthmarshal.io/field-notes/how-to-use-endpoints-to-drive-llm-citations

https://relixir.ai/blog/best-geo-tools-with-visitor-deanonymization-2025-comparison

https://agenxus.com/blog/geo-content-refresh-strategy-maintaining-citation-rates

https://www.loamly.ai/blog/comparison-ai-search-visibility-tools-2026-buyers-guide